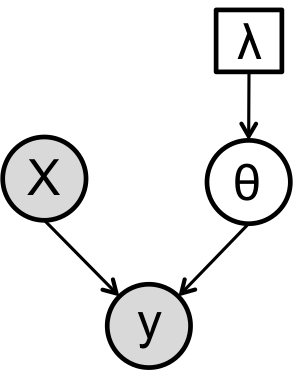

Logistic regression model¶

Likelihood¶

\begin{align*}

y|x, \theta \sim \text{Bernoulli}(\sigma(\theta^T x))

\end{align*}\begin{align*}

\sigma(x) = \frac{1}{1 + \exp(-\theta^T x)}

\end{align*}\begin{align*}

p(y|x, \theta) &= \sigma(\theta^T x)^y * (1 - \sigma(\theta^T x))^{1 - y}\\

&= \frac{\exp(y\theta^T x)}{1 + \exp(\theta^T x)}

\end{align*}\begin{align*}

\log p(y|x, \theta) = y \theta^T x - \log(1 + \exp(\theta^T x))

\end{align*}

Prior¶

\begin{align*}

\theta \sim \mathcal{N}(0, \lambda^{-1})

\end{align*}\begin{align*}

\log p(\theta) = -\frac{\lambda}{2}||\theta||_2^2 + \text{const}

\end{align*}

Objective function¶

\begin{align*}

f(\theta) = -\sum_i \left\{y^i \theta^T x^i - \log(1 + \exp(\theta^T x^i))\right\}

+ \frac{\lambda}{2}||\theta||_2^2

\end{align*}\begin{align*}

\frac{\delta f(\theta)}{\delta \theta_k} &=

-\sum_i \left\{ y^i x^i_k - x^i_k \frac{\exp(\theta^T x^i)}{1 + \exp(\theta^T x^i)} \right\}

+ \lambda\theta_k \\

&= -\sum_i x^i_k \left\{y^i - \frac{1}{1 + \exp(-\theta^T x^i)} \right\}

+ \lambda\theta_k

\end{align*}

Dependencies¶

In [1]:

import numpy as np

import sklearn.metrics as met

import sklearn.datasets as ds

from scipy.optimize import fmin_bfgs

import matplotlib.pyplot as plt

import time

In [2]:

%matplotlib inline

Base class¶

In [3]:

class LogisticRegressionBase(object):

""" Abstract class for logistic regression.

NOTE: Do not modify!

"""

def __init__(self, X, y):

self.X = X

self.y = y

self.n_samples = self.X.shape[0]

self.n_features = self.X.shape[1]

self.lambda_ = 0.05

def f(self, theta):

""" Evaluate objective function f at theta. """

return None

def fprime(self, theta):

""" Compute first derivative of f at theta. """

return None

def _init_params(self):

""" Initialize model parameters. """

self.theta0 = np.random.randn(self.n_features)

def fit(self):

""" Fit theta via gradient descent. """

self._init_params()

self.theta = fmin_bfgs(f=self.f, x0=self.theta0,

fprime=self.fprime)

self._f0 = self.f(self.theta0)

self._f = self.f(self.theta)

print('f(theta0): %.3f' % self._f0)

print('f(theta): %.3f' % self._f)

print('Training accuracy: %.3f' % met.accuracy_score(self.y, self.predict(hard=True)))

def sigmoid(self, x):

return 1 / (1 + np.exp(-x))

def predict(self, X=None, hard=False):

if X is None:

X = self.X

p = self.sigmoid(X.dot(self.theta))

return np.round(p) if hard else p

Hands on: Implement logistic regression!¶

In [4]:

class LogisticRegressionYours(LogisticRegressionBase):

""" Your implementation of logistic regression."""

def f(self, theta):

""" Evaluate objective function f at theta.

Parameters

----------

theta: parameter vector (self.n_features)

self.X: design matrix (self.n_samples x self.n_features)

self.y: labels (self.n_features)

self.lambda_: regularization paramter

"""

X = self.X

y = self.y

lambda_ = self.lambda_

# TODO: return f(theta)

raise NotImplementedError()

def fprime(self, theta):

""" Compute first derivative of f at theta.

Parameters

----------

theta: parameter vector (self.n_features)

self.X: design matrix (self.n_samples x self.n_features)

self.y: labels (self.n_features)

self.lambda_: regularization paramter

"""

X = self.X

y = self.y

lambda_ = self.lambda_

# TODO: return fprime(theta)

raise NotImplementedError()

Reference Implementation¶

In [5]:

class LogisticRegressionReference(LogisticRegressionBase):

def f(self, theta):

z = self.X.dot(theta)

rv = -np.sum(self.y * z - np.log(1 + np.exp(z)))

rv += 0.5 * self.lambda_ * theta.dot(theta)

return rv

def fprime(self, theta):

div = self.y - 1 / (1 + np.exp(-self.X.dot(theta)))

return -np.sum(self.X * div.reshape(-1, 1), axis=0) + self.lambda_ * theta

Naive implementation¶

In [6]:

class LogisticRegressionNaive(LogisticRegressionBase):

def f(self, theta):

rv = 0.0

for i in range(self.n_samples):

rv -= self.y[i] * self.X[i].dot(theta) - np.log(1 + np.exp(self.X[i].dot(theta)))

rv += self.lambda_ * theta.dot(theta)

return rv

def fprime(self, theta):

prime = np.empty(theta.size)

for k in range(self.n_features):

prime[k] = 0.0

for i in range(self.n_samples):

prime[k] -= self.y[i] * self.X[i, k] - self.X[i, k] * \

np.exp(theta.dot(self.X[i])) / (1 + np.exp(theta.dot(self.X[i])))

prime[k] += self.lambda_ * theta[k]

return prime

Data¶

In [7]:

# Randomly generate classification dataset

np.random.seed(0)

n_samples = 1000

n_features = 100

X, y = ds.make_classification(n_samples=n_samples,

n_features=n_features,

n_informative=int(n_features / 2))

Evaluation¶

In [8]:

def evaluate(lr):

""" Evaluate performance of logistic regression model. """

t0 = time.clock()

lr.fit()

t1 = time.clock()

print('Fitting time (sec): %d' % (t1 - t0))

print('Accuracy: %.3f' % (met.accuracy_score(lr.y, lr.predict(hard=True))))

print('AUC: %.3f' % (met.roc_auc_score(lr.y, lr.predict())))

fpr, tpr, thr = met.roc_curve(lr.y, lr.predict())

fig, ax = plt.subplots(figsize=(10, 7))

ax.plot(fpr, tpr, linewidth=3)

ax.set_xlabel('False positive rate')

ax.set_ylabel('True positive rate')

ax.grid()

Reference implementation¶

In [9]:

# Evaluate reference implementation

evaluate(LogisticRegressionReference(X, y))

Optimization terminated successfully.

Current function value: 391.096883

Iterations: 146

Function evaluations: 188

Gradient evaluations: 188

f(theta0): 13107.042

f(theta): 391.097

Training accuracy: 0.819

Fitting time (sec): 0

Accuracy: 0.819

AUC: 0.905

-c:4: RuntimeWarning: overflow encountered in exp -c:9: RuntimeWarning: overflow encountered in exp

Naive implementation¶

In [12]:

# Evaluate naive implementation

evaluate(LogisticRegressionNaive(X, y))

Warning: Desired error not necessarily achieved due to precision loss.

Current function value: 395.728108

Iterations: 90

Function evaluations: 137

Gradient evaluations: 125

f(theta0): 11131.183

f(theta): 395.728

Training accuracy: 0.825

Fitting time (sec): 108

Accuracy: 0.825

AUC: 0.903

Your implementation¶

In [ ]:

# Evaluate your implementation

evaluate(LogisticRegressionYours(X, y))