1. Preparing the environment

1.1. A brief introduction

This is a case study I proposed in my B.Sc. thesis in Computer Science. The dataset used here was provided by Chris Whong. So, thank you, Chris, for offering this dataset to the public, and also to machine learning noobs like me, especially after how you got it.

As a brief abstract, what is achieved at the end of this series of notebooks is predicting whether the tip for a NYC taxi trip is going to be less than 20% of the charge, or greater than or equal to 20% of it, with an accuracy of 71.74%. This is done without being able to use any information about the passengers, which would be essential data for this task.
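To make the prediction target concrete, here is a minimal sketch of how the two classes could be derived from a single trip. The function name `tip_label`, its parameters, and the choice of which amount counts as "the charge" are assumptions made for illustration; they are not taken from the notebooks.

```python
def tip_label(tip_amount, charge, threshold=0.20):
    """Return 1 if the tip is at least `threshold` of the charge, else 0.

    Hypothetical helper: `charge` stands for whatever amount the tip
    percentage is measured against (an assumption, not the thesis code).
    """
    if charge <= 0:
        # A tip percentage is undefined for a zero or negative charge.
        raise ValueError("charge must be positive")
    return 1 if tip_amount / charge >= threshold else 0
```

For example, `tip_label(2.0, 10.0)` falls in the "greater than or equal to 20%" class, while `tip_label(1.0, 10.0)` falls in the "less than 20%" class.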

1.2. The tools

The libraries you need to run these notebooks on your machine, besides IPython Notebook, are:

Also, for my thesis I created BMO, a Machine Learning toolbox based on Docker and IPython Notebook that creates an isolated Linux environment with all the libraries you need.

1.3. The data

The data used in this study is the information offered by the NYC Taxi and Limousine Commission about the taxi trips made in NYC in 2013: a total of 173,179,759 trips in 48.6 GB of uncompressed CSV files!

You can download the dataset from Chris's post. It's offered as a compressed set of 24 CSV files, 2 per month of 2013. The first of these two files contains the information about the trip fares. The second one has the physical information about the trips, such as trip time and pickup and dropoff GPS coordinates.

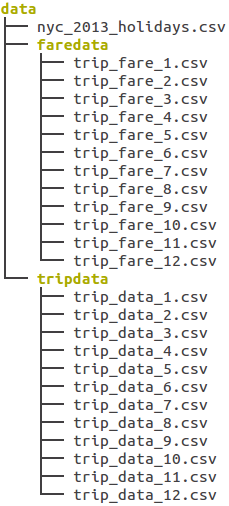

After downloading all the files, place them inside the data folder so that these notebooks can use them, as shown in the next figure:

As you can see, there is a strange file called nyc_2013_holidays.csv. This file, used a few steps ahead, contains the list of NYC holidays, extracted from the nyc.gov website.
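Before moving on, it may help to check that the data folder looks as described. The sketch below is only an illustration: the helper name `check_data_folder` and the relative path `data` are assumptions, and because the names of the 24 downloaded files are not given here, it simply counts every CSV file other than nyc_2013_holidays.csv.

```python
from pathlib import Path

HOLIDAYS_FILE = "nyc_2013_holidays.csv"  # file name mentioned in the text
EXPECTED_DATA_FILES = 24                 # 2 CSV files per month of 2013


def check_data_folder(folder="data"):
    """Summarize the CSV files found in `folder` (hypothetical helper)."""
    folder = Path(folder)
    if not folder.is_dir():
        raise FileNotFoundError(f"data folder not found: {folder}")
    # Collect the names of all CSV files directly inside the folder.
    csv_names = sorted(p.name for p in folder.glob("*.csv"))
    # Everything except the holidays file is assumed to be a dataset file.
    data_files = [name for name in csv_names if name != HOLIDAYS_FILE]
    return {
        "data_files": data_files,
        "has_holidays_file": HOLIDAYS_FILE in csv_names,
        "complete": len(data_files) == EXPECTED_DATA_FILES,
    }
```

Calling `check_data_folder("data")` from the folder that contains the notebooks returns a small dictionary; if `complete` is `False`, some of the 24 files are probably missing or still compressed.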

Let's start to analyze this dataset by checking out the [next notebook](2. Exploring a subdataset.ipynb).