Python for Data Science¶

Joe McCarthy, Data Scientist, Indeed

from IPython.display import display, Image, HTML

Navigation¶

Notebooks in this primer:

- Introduction

- Data Science: Basic Concepts (you are here)

- Python: Basic Concepts

- Using Python to Build and Use a Simple Decision Tree Classifier

- Next Steps

2. Data Science: Basic Concepts¶

Data Science and Data Mining¶

Foster Provost and Tom Fawcett offer succinct descriptions of data science and data mining in Data Science for Business:

Data science involves principles, processes and techniques for understanding phenomena via the (automated) analysis of data.

Data mining is the extraction of knowledge from data, via technologies that incorporate these principles.

Knowledge Discovery, Data Mining and Machine Learning¶

Provost & Fawcett also offer some history and insights into the relationship between data mining and machine learning, terms which are often used somewhat interchangeably:

The field of Data Mining (or KDD: Knowledge Discovery and Data Mining) started as an offshoot of Machine Learning, and they remain closely linked. Both fields are concerned with the analysis of data to find useful or informative patterns. Techniques and algorithms are shared between the two; indeed, the areas are so closely related that researchers commonly participate in both communities and transition between them seamlessly. Nevertheless, it is worth pointing out some of the differences to give perspective.

Speaking generally, because Machine Learning is concerned with many types of performance improvement, it includes subfields such as robotics and computer vision that are not part of KDD. It also is concerned with issues of agency and cognition — how will an intelligent agent use learned knowledge to reason and act in its environment — which are not concerns of Data Mining.

Historically, KDD spun off from Machine Learning as a research field focused on concerns raised by examining real-world applications, and a decade and a half later the KDD community remains more concerned with applications than Machine Learning is. As such, research focused on commercial applications and business issues of data analysis tends to gravitate toward the KDD community rather than to Machine Learning. KDD also tends to be more concerned with the entire process of data analytics: data preparation, model learning, evaluation, and so on.

Cross Industry Standard Process for Data Mining (CRISP-DM)¶

The Cross Industry Standard Process for Data Mining introduced a process model for data mining in 2000 that has become widely adopted.

The model emphasizes the *iterative* nature of the data mining process, distinguishing several different stages that are regularly revisited in the course of developing and deploying data-driven solutions to business problems:

- Business understanding

- Data understanding

- Data preparation

- Modeling

- Evaluation

- Deployment

We will be focusing primarily on using Python for data preparation and modeling.

Data Science Workflow¶

Philip Guo presents a Data Science Workflow, a slightly different process model that emphasizes the importance of reflection and some of the metadata, data management and bookkeeping challenges that typically arise in the data science process. His 2012 PhD thesis, Software Tools to Facilitate Research Programming, offers an insightful and more comprehensive description of many of these challenges.

Provost & Fawcett list a number of different tasks in which data science techniques are employed:

- Classification and class probability estimation

- Regression (aka value estimation)

- Similarity matching

- Clustering

- Co-occurrence grouping (aka frequent itemset mining, association rule discovery, market-basket analysis)

- Profiling (aka behavior description, fraud / anomaly detection)

- Link prediction

- Data reduction

- Causal modeling

We will be focusing primarily on classification and class probability estimation tasks, which are defined by Provost & Fawcett as follows:

Classification and class probability estimation attempt to predict, for each individual in a population, which of a (small) set of classes this individual belongs to. Usually the classes are mutually exclusive. An example classification question would be: “Among all the customers of MegaTelCo, which are likely to respond to a given offer?” In this example the two classes could be called will respond and will not respond.

To further simplify this primer, we will focus exclusively on supervised methods, in which the data is explicitly labeled with classes. There are also unsupervised methods that involve working with data in which there are no pre-specified class labels.
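The difference between class probability estimation and classification can be sketched with a few labeled examples. The customer segments, the responses and the function names below are hypothetical, invented only to mirror the MegaTelCo question quoted above; relative frequency is just one simple way to estimate a probability.

```python
from collections import Counter

# Hypothetical labeled history: (customer segment, observed response)
labeled_examples = [
    ('student', 'will respond'),
    ('student', 'will respond'),
    ('student', 'will not respond'),
    ('retiree', 'will not respond'),
    ('retiree', 'will not respond'),
    ('retiree', 'will respond'),
    ('retiree', 'will not respond'),
]

def class_probabilities(segment):
    """Estimate class probabilities as relative frequencies within a segment.

    Assumes the segment appears at least once in labeled_examples.
    """
    counts = Counter(label for seg, label in labeled_examples if seg == segment)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}

def classify(segment):
    """Classification: return the most probable class for a segment."""
    probabilities = class_probabilities(segment)
    return max(probabilities, key=probabilities.get)

student_probabilities = class_probabilities('student')
retiree_class = classify('retiree')
print(student_probabilities)
print(retiree_class)
```

Class probability estimation returns a score for every class, while classification reduces those scores to a single predicted label.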

Supervised Classification¶

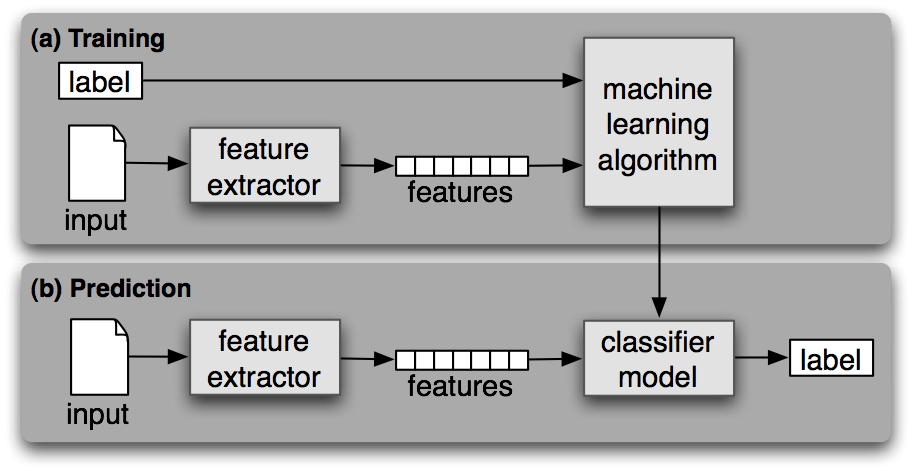

The Natural Language Toolkit (NLTK) book provides a diagram and succinct description (below, with italics and bold added for emphasis) of supervised classification:

Supervised Classification. (a) During training, a feature extractor is used to convert each input value to a feature set. These feature sets, which capture the basic information about each input that should be used to classify it, are discussed in the next section. Pairs of feature sets and labels are fed into the machine learning algorithm to generate a model. (b) During prediction, the same feature extractor is used to convert unseen inputs to feature sets. These feature sets are then fed into the model, which generates predicted labels.
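As a rough illustration of the two phases described above, the following sketch uses a deliberately simple stand-in for a machine learning algorithm: it memorizes the most common label for each value of a single feature. The function names (`extract_features`, `train`, `predict`), the choice of `odor` as the only feature and the three training records are all assumptions made for illustration; they come neither from NLTK nor from any real data file.

```python
from collections import Counter, defaultdict

def extract_features(raw_input):
    """Feature extractor: convert a raw input (a dict here) to a feature set."""
    return {'odor': raw_input['odor']}

def train(labeled_inputs):
    """Training: build a model from pairs of feature sets and labels.

    The 'model' is a lookup table from odor to its most common label,
    plus the overall most common label as a fallback for unseen odors.
    """
    label_counts_by_odor = defaultdict(Counter)
    overall_counts = Counter()
    for raw_input, label in labeled_inputs:
        features = extract_features(raw_input)
        label_counts_by_odor[features['odor']][label] += 1
        overall_counts[label] += 1
    return {
        'by_odor': {odor: counts.most_common(1)[0][0]
                    for odor, counts in label_counts_by_odor.items()},
        'default': overall_counts.most_common(1)[0][0],
    }

def predict(model, raw_input):
    """Prediction: apply the same feature extractor, then consult the model."""
    features = extract_features(raw_input)
    return model['by_odor'].get(features['odor'], model['default'])

# Hypothetical labeled inputs, made up for this sketch
training_data = [
    ({'odor': 'foul', 'habitat': 'woods'}, 'poisonous'),
    ({'odor': 'foul', 'habitat': 'paths'}, 'poisonous'),
    ({'odor': 'almond', 'habitat': 'meadows'}, 'edible'),
]

model = train(training_data)
prediction_seen = predict(model, {'odor': 'almond', 'habitat': 'woods'})
prediction_unseen = predict(model, {'odor': 'musty', 'habitat': 'woods'})
print(prediction_seen, prediction_unseen)
```

Note that the same `extract_features` function is used in both phases, which is the point the description stresses: the model only ever sees feature sets, never raw inputs.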

Data Mining Terminology¶

- Structured data has simple, well-defined patterns (e.g., a table or graph)

- Unstructured data has less well-defined patterns (e.g., text, images)

- Model: a pattern that captures / generalizes regularities in data (e.g., an equation, set of rules, decision tree)

- Attribute (aka variable, feature, signal, column): an element used in a model

- Instance (aka example, feature vector, row): a representation of a single entity being modeled

- Target attribute (aka dependent variable, class label): the class / type / category of an entity being modeled
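To make these terms concrete, here is a minimal sketch that represents a tiny structured data set as a list of Python dictionaries. The three rows are hypothetical values chosen for illustration (they are not taken from any real data file), and the variable names are likewise assumptions.

```python
# Each dictionary is one instance (row); each key is an attribute (column).
# The rows are hypothetical, made up for illustration.
instances = [
    {'odor': 'almond', 'gill-size': 'broad', 'class': 'edible'},
    {'odor': 'foul', 'gill-size': 'narrow', 'class': 'poisonous'},
    {'odor': 'none', 'gill-size': 'broad', 'class': 'edible'},
]

target_attribute = 'class'

# Attributes other than the target are the inputs a model may use
input_attributes = [name for name in instances[0] if name != target_attribute]

# Separate each instance into its input values and its target value
inputs = [[instance[name] for name in input_attributes] for instance in instances]
targets = [instance[target_attribute] for instance in instances]

print(input_attributes)
print(inputs[0], targets[0])
```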

Data Mining Example: UCI Mushroom dataset¶

The Center for Machine Learning and Intelligent Systems at the University of California, Irvine (UCI), hosts a Machine Learning Repository containing over 200 publicly available data sets.

We will use the mushroom data set, which forms the basis of several examples in Chapter 3 of the Provost & Fawcett data science book.

The following description of the dataset is provided at the UCI repository:

This data set includes descriptions of hypothetical samples corresponding to 23 species of gilled mushrooms in the Agaricus and Lepiota Family (pp. 500-525 [The Audubon Society Field Guide to North American Mushrooms, 1981]). Each species is identified as definitely edible, definitely poisonous, or of unknown edibility and not recommended. This latter class was combined with the poisonous one. The Guide clearly states that there is no simple rule for determining the edibility of a mushroom; no rule like “leaflets three, let it be” for Poisonous Oak and Ivy.

Number of Instances: 8124

Number of Attributes: 22 (all nominally valued)

Attribute Information: (classes: edible=e, poisonous=p)

- cap-shape: bell=b, conical=c, convex=x, flat=f, knobbed=k, sunken=s

- cap-surface: fibrous=f, grooves=g, scaly=y, smooth=s

- cap-color: brown=n, buff=b, cinnamon=c, gray=g, green=r, pink=p, purple=u, red=e, white=w, yellow=y

- bruises?: bruises=t, no=f

- odor: almond=a, anise=l, creosote=c, fishy=y, foul=f, musty=m, none=n, pungent=p, spicy=s

- gill-attachment: attached=a, descending=d, free=f, notched=n

- gill-spacing: close=c, crowded=w, distant=d

- gill-size: broad=b, narrow=n

- gill-color: black=k, brown=n, buff=b, chocolate=h, gray=g, green=r, orange=o, pink=p, purple=u, red=e, white=w, yellow=y

- stalk-shape: enlarging=e, tapering=t

- stalk-root: bulbous=b, club=c, cup=u, equal=e, rhizomorphs=z, rooted=r, missing=?

- stalk-surface-above-ring: fibrous=f, scaly=y, silky=k, smooth=s

- stalk-surface-below-ring: fibrous=f, scaly=y, silky=k, smooth=s

- stalk-color-above-ring: brown=n, buff=b, cinnamon=c, gray=g, orange=o, pink=p, red=e, white=w, yellow=y

- stalk-color-below-ring: brown=n, buff=b, cinnamon=c, gray=g, orange=o, pink=p, red=e, white=w, yellow=y

- veil-type: partial=p, universal=u

- veil-color: brown=n, orange=o, white=w, yellow=y

- ring-number: none=n, one=o, two=t

- ring-type: cobwebby=c, evanescent=e, flaring=f, large=l, none=n, pendant=p, sheathing=s, zone=z

- spore-print-color: black=k, brown=n, buff=b, chocolate=h, green=r, orange=o, purple=u, white=w, yellow=y

- population: abundant=a, clustered=c, numerous=n, scattered=s, several=v, solitary=y

- habitat: grasses=g, leaves=l, meadows=m, paths=p, urban=u, waste=w, woods=d

Missing Attribute Values: 2480 of them (denoted by "?"), all for attribute #11.

Class Distribution: edible: 4208 (51.8%); poisonous: 3916 (48.2%); total: 8124 instances
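The class distribution reported above gives a useful reference point for any classifier built on this data: always predicting the majority class would already be right a little over half the time. The short calculation below reproduces the percentages from the reported counts; the variable names are illustrative.

```python
# Counts as reported in the UCI description
class_counts = {'edible': 4208, 'poisonous': 3916}

total = sum(class_counts.values())

# Percentage of instances in each class, rounded to one decimal place
class_percentages = {label: round(100 * count / total, 1)
                     for label, count in class_counts.items()}

# Accuracy of a trivial classifier that always predicts the majority class
majority_class = max(class_counts, key=class_counts.get)
baseline_accuracy = class_counts[majority_class] / total

print(total)
print(class_percentages)
print(majority_class, round(baseline_accuracy, 3))
```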

The data file associated with this dataset has one instance of a hypothetical mushroom per line, with abbreviations for the values of the class and each of the other 22 attributes separated by commas.

Here is a sample line from the data file:

p,k,f,n,f,n,f,c,n,w,e,?,k,y,w,n,p,w,o,e,w,v,d
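A line like this can be turned into a more readable structure by pairing each value with its attribute name. The sketch below assumes the values appear in the order given in the attribute list above, with the class first; the names `attribute_names`, `instance` and `missing` are illustrative.

```python
# Attribute names in file order: the class label, then the 22 attributes
attribute_names = [
    'class', 'cap-shape', 'cap-surface', 'cap-color', 'bruises?', 'odor',
    'gill-attachment', 'gill-spacing', 'gill-size', 'gill-color',
    'stalk-shape', 'stalk-root', 'stalk-surface-above-ring',
    'stalk-surface-below-ring', 'stalk-color-above-ring',
    'stalk-color-below-ring', 'veil-type', 'veil-color', 'ring-number',
    'ring-type', 'spore-print-color', 'population', 'habitat',
]

sample_line = 'p,k,f,n,f,n,f,c,n,w,e,?,k,y,w,n,p,w,o,e,w,v,d'

# Split the comma-separated line and check it has one value per attribute
values = sample_line.strip().split(',')
assert len(values) == len(attribute_names)

# Pair each attribute name with its abbreviated value
instance = dict(zip(attribute_names, values))

# Attributes whose value is the missing-value marker '?'
missing = [name for name, value in instance.items() if value == '?']

print(instance['class'], instance['odor'], instance['habitat'])
print(missing)
```

The only missing value in this line belongs to `stalk-root`, the eleventh attribute after the class, which is consistent with the note on missing values in the description.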

This instance represents a mushroom with the following attribute values (highlighted in bold):

class: edible=e, **poisonous=p**

- cap-shape: bell=b, conical=c, convex=x, flat=f, **knobbed=k**, sunken=s

- cap-surface: **fibrous=f**, grooves=g, scaly=y, smooth=s

- cap-color: **brown=n**, buff=b, cinnamon=c, gray=g, green=r, pink=p, purple=u, red=e, white=w, yellow=y

- bruises?: bruises=t, **no=f**

- odor: almond=a, anise=l, creosote=c, fishy=y, foul=f, musty=m, **none=n**, pungent=p, spicy=s

- gill-attachment: attached=a, descending=d, **free=f**, notched=n

- gill-spacing: **close=c**, crowded=w, distant=d

- gill-size: broad=b, **narrow=n**

- gill-color: black=k, brown=n, buff=b, chocolate=h, gray=g, green=r, orange=o, pink=p, purple=u, red=e, **white=w**, yellow=y

- stalk-shape: **enlarging=e**, tapering=t

- stalk-root: bulbous=b, club=c, cup=u, equal=e, rhizomorphs=z, rooted=r, **missing=?**

- stalk-surface-above-ring: fibrous=f, scaly=y, **silky=k**, smooth=s

- stalk-surface-below-ring: fibrous=f, **scaly=y**, silky=k, smooth=s

- stalk-color-above-ring: brown=n, buff=b, cinnamon=c, gray=g, orange=o, pink=p, red=e, **white=w**, yellow=y

- stalk-color-below-ring: **brown=n**, buff=b, cinnamon=c, gray=g, orange=o, pink=p, red=e, white=w, yellow=y

- veil-type: **partial=p**, universal=u

- veil-color: brown=n, orange=o, **white=w**, yellow=y

- ring-number: none=n, **one=o**, two=t

- ring-type: cobwebby=c, **evanescent=e**, flaring=f, large=l, none=n, pendant=p, sheathing=s, zone=z

- spore-print-color: black=k, brown=n, buff=b, chocolate=h, green=r, orange=o, purple=u, **white=w**, yellow=y

- population: abundant=a, clustered=c, numerous=n, scattered=s, **several=v**, solitary=y

- habitat: grasses=g, leaves=l, meadows=m, paths=p, urban=u, waste=w, **woods=d**
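Decoding the single-letter abbreviations can be automated with lookup tables built from the attribute information above. The sketch below covers only three attributes to stay short; the names `value_names` and `decode` are assumptions, and a full version would need a table for every attribute.

```python
# Abbreviation tables copied from the attribute information (three attributes only)
value_names = {
    'class': {'e': 'edible', 'p': 'poisonous'},
    'odor': {'a': 'almond', 'l': 'anise', 'c': 'creosote', 'y': 'fishy',
             'f': 'foul', 'm': 'musty', 'n': 'none', 'p': 'pungent',
             's': 'spicy'},
    'stalk-root': {'b': 'bulbous', 'c': 'club', 'u': 'cup', 'e': 'equal',
                   'z': 'rhizomorphs', 'r': 'rooted', '?': 'missing'},
}

def decode(attribute, abbreviation):
    """Return the full value name, or the abbreviation itself if unknown."""
    return value_names.get(attribute, {}).get(abbreviation, abbreviation)

# Values of the sample instance for these three attributes
sample = {'class': 'p', 'odor': 'n', 'stalk-root': '?'}

decoded = {attribute: decode(attribute, abbreviation)
           for attribute, abbreviation in sample.items()}
print(decoded)
```

The same letter can stand for different values in different attributes (for example, `n` means none for odor but brown for cap-color), which is why the lookup is keyed by attribute first.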

Building a model with this data set will serve as a motivating example throughout much of this primer.
