PLOS Cloud Explorer: The Process¶

This notebook is about our process for figuring out what PLOS Cloud Explorer was going to be. It includes early code, prototypes, and dead ends.

For the full story including the happy ending, read this document and follow the other notebook links to see the code we actually used.

First things first. All imports for this notebook:

from __future__ import unicode_literals

# You need an API Key for PLOS

import settings

# Data analysis

import numpy as np

import pandas as pd

from numpy import nan

from pandas import Series, DataFrame

# Interacting with API

import requests

import urllib

import time

from retrying import retry

import os

import random

import json

# Natural language processing

import nltk

from nltk.collocations import BigramCollocationFinder

from nltk.metrics import BigramAssocMeasures

from nltk.corpus import stopwords

import string

# For the IPython widgets:

from IPython.display import display, Image, HTML, clear_output

from IPython.html import widgets

from jinja2 import Template

Data Collection¶

We began with a really simple way of getting article data from the PLOS Search API:

r = requests.get('http://api.plos.org/search?q=subject:"biotechnology"&start=0&rows=500&api_key={%s}&wt=json' % settings.PLOS_KEY).json()

len(r['response']['docs'])

500

# Write out a file.

with open('biotech500.json', 'wb') as fp:
    json.dump(r, fp)

We later developed a much more sophisticated way to get huge amounts of data from the API. To see how we collected data sets, see the batch data collection notebook.
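As a rough illustration of how a larger result set can be paged through with the `start` and `rows` parameters seen in the query above, here is a sketch that only builds the request URLs and makes no network calls. It is not the code from the batch data collection notebook; the helper name `page_urls`, the placeholder key `YOUR_KEY` and the total of 1200 results are assumptions made for illustration.

```python
try:
    from urllib.parse import urlencode, urlparse, parse_qs  # Python 3
except ImportError:
    from urllib import urlencode  # Python 2
    from urlparse import urlparse, parse_qs

BASE_URL = 'http://api.plos.org/search'


def page_urls(query, total, rows=500, api_key='YOUR_KEY'):
    """Build one search URL per page of `rows` results (hypothetical helper)."""
    urls = []
    for start in range(0, total, rows):
        params = [
            ('q', query),
            ('start', start),
            # The last page may be shorter than a full page.
            ('rows', min(rows, total - start)),
            ('api_key', api_key),
            ('wt', 'json'),
        ]
        urls.append(BASE_URL + '?' + urlencode(params))
    return urls


urls = page_urls('subject:"biotechnology"', total=1200)
# Parse the last URL back into its parameters to check the paging.
last_page = parse_qs(urlparse(urls[-1]).query)
```

Each URL could then be fetched with `requests.get(url).json()` as in the cell above, ideally with a pause between requests.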

Exploring Output¶

Here we show what the output looks like, from a previously run API query. Through the magic of Python, we can pickle the resulting DataFrame and access it again now without making any API calls.

abstract_df = pd.read_pickle('../data/abstract_df.pkl')

len(list(abstract_df.author))

1120

print list(abstract_df.subject)[0]

[u'/Computer and information sciences/Information technology/Data processing', u'/Computer and information sciences/Information technology/Data reduction', u'/Physical sciences/Mathematics/Statistics (mathematics)/Statistical methods', u'/Research and analysis methods/Mathematical and statistical techniques/Statistical methods', u'/Computer and information sciences/Information technology/Databases', u'/Physical sciences/Mathematics/Statistics (mathematics)/Statistical data', u'/Computer and information sciences/Computer architecture/User interfaces', u'/Medicine and health sciences/Infectious diseases/Infectious disease control', u'/Computer and information sciences/Data management']

abstract_df.tail()

| | abstract | author | id | journal | publication_date | score | subject | title_display |
|---|---|---|---|---|---|---|---|---|
| 15 | [\nPopulation structure can confound the ident... | [Jonathan Carlson, Carl Kadie, Simon Mallal, D... | 10.1371/journal.pone.0000591 | PLoS ONE | 2007-07-04T00:00:00Z | 0.443733 | [/Biology and life sciences/Genetics/Phenotype... | Leveraging Hierarchical Population Structure i... |
| 16 | [\n The discrimination of thatcherized ... | [Nick Donnelly, Nicole R Zürcher, Katherine Co... | 10.1371/journal.pone.0023340 | PLoS ONE | 2011-08-31T00:00:00Z | 0.443733 | [/Medicine and health sciences/Diagnostic medi... | Discriminating Grotesque from Typical Faces: E... |
| 17 | [\nInfluenza viruses have been responsible for... | [Zhipeng Cai, Tong Zhang, Xiu-Feng Wan] | 10.1371/journal.pcbi.1000949 | PLoS Computational Biology | 2010-10-07T00:00:00Z | 0.443733 | [/Biology and life sciences/Organisms/Viruses/... | A Computational Framework for Influenza Antige... |
| 18 | [\n Based on previous evidence for indi... | [Luis F H Basile, João R Sato, Milkes Y Alvare... | 10.1371/journal.pone.0059595 | PLoS ONE | 2013-03-27T00:00:00Z | 0.443733 | [/Medicine and health sciences/Diagnostic medi... | Lack of Systematic Topographic Difference betw... |
| 19 | [Objective: Herpes simplex virus type 2 (HSV-2... | [Alison C Roxby, Alison L Drake, Francisca Ong... | 10.1371/journal.pone.0038622 | PLoS ONE | 2012-06-12T00:00:00Z | 0.443733 | [/Medicine and health sciences/Women's health/... | Effects of Valacyclovir on Markers of Disease ... |

5 rows × 8 columns

Initial attempts to make word clouds using abstracts¶

We wanted to use basic natural language processing (NLP) to make word clouds out of aggregated abstract text, and see how they change over time.

NB: These examples use a previously collected dataset that's different and smaller than the one we generated above.

# Globally define a set of stopwords.

stops = set(stopwords.words('english'))

# We can add science-y stuff to it as well. Just an example:

stops.add('conclusions')

def wordify(abs_list, min_word_len=2):
    '''
    Convert the abstract field from PLoS API data to a filtered list of words.
    '''
    # The abstract field is a list. Make it a string.
    text = ' '.join(abs_list).strip(' \n\t')
    if text == '':
        return nan
    else:
        # Remove punctuation & replace with space,
        # because we want 'metal-contaminated' => 'metal contaminated'
        # ...not 'metalcontaminated', and so on.
        for c in string.punctuation:
            text = text.replace(c, ' ')
        # Now make it a Series of words, and do some cleaning.
        words = Series(text.split(' '))
        words = words.str.lower()
        # Filter out words less than minimum word length.
        words = words[words.str.len() >= min_word_len]
        # Keep only words made up entirely of a-z, '#' or '@'. This drops
        # tokens containing digits, non-ASCII letters or stray whitespace.
        words = words[~words.str.contains(r'[^#@a-z]')]
        # Filter out globally-defined stopwords
        ignore = stops & set(words.unique())
        words_out = [w for w in words.tolist() if w not in ignore]
        return words_out
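To make the filtering steps concrete, here is a standard-library sketch that mirrors wordify on a made-up input. It is an approximation, not the function above: `wordify_plain`, the tiny `SAMPLE_STOPS` set (a stand-in for the NLTK stopword list) and the sample text are all assumptions, and it splits on any whitespace rather than on single spaces.

```python
import re
import string

# Stand-in for the NLTK English stopword list (assumption for illustration).
SAMPLE_STOPS = {'the', 'of', 'and', 'in', 'were'}


def wordify_plain(abs_list, min_word_len=2, stops=SAMPLE_STOPS):
    """Plain-Python approximation of wordify, returning None for empty text."""
    text = ' '.join(abs_list).strip(' \n\t')
    if text == '':
        return None
    # Replace punctuation with spaces so hyphenated terms become two words.
    for c in string.punctuation:
        text = text.replace(c, ' ')
    # Unlike the original, split on any run of whitespace.
    words = [w.lower() for w in text.split()]
    # Drop words shorter than the minimum length.
    words = [w for w in words if len(w) >= min_word_len]
    # Drop words containing anything other than a-z (digits, accents, ...).
    words = [w for w in words if re.search(r'[^a-z]', w) is None]
    # Drop stopwords.
    return [w for w in words if w not in stops]


sample = ['\nMetal-contaminated soils in 3 sites were sampled.\n',
          'H2O and the pH of E. coli']
cleaned = wordify_plain(sample)
```

Here '3' and 'E' fall to the length filter, 'h2o' to the character filter, and 'in', 'were', 'and', 'the', 'of' to the stopword filter.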

Load up some data.

with open('biotech500.json', 'rb') as fp:
    data = json.load(fp)

articles_list = data['response']['docs']

articles = DataFrame(articles_list)

articles = articles[articles['abstract'].notnull()]

articles.head()

| | abstract | article_type | author_display | eissn | id | journal | publication_date | score | title_display |
|---|---|---|---|---|---|---|---|---|---|
| 7 | [\nThe objective of this paper is to assess th... | Research Article | [Latifah Amin, Md. Abul Kalam Azad, Mohd Hanaf... | 1932-6203 | 10.1371/journal.pone.0086174 | PLoS ONE | 2014-01-29T00:00:00Z | 1.211935 | Determinants of Public Attitudes to Geneticall... |
| 16 | [\n Atrazine (ATZ) and S-metolachlor (S... | Research Article | [Cristina A. Viegas, Catarina Costa, Sandra An... | 1932-6203 | 10.1371/journal.pone.0037140 | PLoS ONE | 2012-05-15T00:00:00Z | 1.119538 | Does <i>S</i>-Metolachlor Affect the Performan... |
| 17 | [\nDue to environmental persistence and biotox... | Research Article | [Yonggang Yang, Meiying Xu, Zhili He, Jun Guo,... | 1932-6203 | 10.1371/journal.pone.0070686 | PLoS ONE | 2013-08-05T00:00:00Z | 1.119538 | Microbial Electricity Generation Enhances Deca... |
| 34 | [\n Intensive use of chlorpyrifos has r... | Research Article | [Shaohua Chen, Chenglan Liu, Chuyan Peng, Hong... | 1932-6203 | 10.1371/journal.pone.0047205 | NaN | 2012-10-08T00:00:00Z | 1.119538 | Biodegradation of Chlorpyrifos and Its Hydroly... |
| 35 | [Background: The complex characteristics and u... | Research Article | [Zhongbo Zhou, Fangang Meng, So-Ryong Chae, Gu... | 1932-6203 | 10.1371/journal.pone.0042270 | NaN | 2012-08-09T00:00:00Z | 0.989541 | Microbial Transformation of Biomacromolecules ... |

5 rows × 9 columns

Applying this to the whole DataFrame of articles

articles['words'] = articles.apply(lambda s: wordify(s['abstract'] + [s['title_display']]), axis=1)

articles.drop(['article_type', 'score', 'title_display', 'abstract'], axis=1, inplace=True)

articles.head()

| | author_display | eissn | id | journal | publication_date | words |
|---|---|---|---|---|---|---|
| 7 | [Latifah Amin, Md. Abul Kalam Azad, Mohd Hanaf... | 1932-6203 | 10.1371/journal.pone.0086174 | PLoS ONE | 2014-01-29T00:00:00Z | [objective, paper, assess, attitude, malaysian... |
| 16 | [Cristina A. Viegas, Catarina Costa, Sandra An... | 1932-6203 | 10.1371/journal.pone.0037140 | PLoS ONE | 2012-05-15T00:00:00Z | [atrazine, atz, metolachlor, met, two, herbici... |
| 17 | [Yonggang Yang, Meiying Xu, Zhili He, Jun Guo,... | 1932-6203 | 10.1371/journal.pone.0070686 | PLoS ONE | 2013-08-05T00:00:00Z | [due, environmental, persistence, biotoxicity,... |
| 34 | [Shaohua Chen, Chenglan Liu, Chuyan Peng, Hong... | 1932-6203 | 10.1371/journal.pone.0047205 | NaN | 2012-10-08T00:00:00Z | [intensive, use, chlorpyrifos, resulted, ubiqu... |
| 35 | [Zhongbo Zhou, Fangang Meng, So-Ryong Chae, Gu... | 1932-6203 | 10.1371/journal.pone.0042270 | NaN | 2012-08-09T00:00:00Z | [background, complex, characteristics, unclear... |

5 rows × 6 columns

Doing some natural language processing¶

abs_df = DataFrame(articles['words'].apply(lambda x: ' '.join(x)).tolist(), columns=['text'])

abs_df.head()

| | text |
|---|---|
| 0 | objective paper assess attitude malaysian stak... |
| 1 | atrazine atz metolachlor met two herbicides wi... |
| 2 | due environmental persistence biotoxicity poly... |
| 3 | intensive use chlorpyrifos resulted ubiquitous... |
| 4 | background complex characteristics unclear bio... |

5 rows × 1 columns

Common word pairs¶

This section uses all words from abstracts to find the common word pairs.

#include all words from abstracts for getting common word pairs

words_all = pd.Series(' '.join(abs_df['text']).split(' '))

words_all.value_counts()

study             56
using             33
two               32
patients          31
biodegradation    30
non               29
data              28
three             28
analysis          27
compared          27
soil              27
new               27
results           26
species           25
cell              25
...
engage             1
thermal            1
geochip            1
dominant           1
suggests           1
third              1
usually            1
locomotion         1
rpos               1
scales             1
prefer             1
quite              1
protocatechuate    1
routine            1
agr                1
Length: 3028, dtype: int64

relevant_words_pairs = words_all.copy()

relevant_words_pairs.value_counts()

study             56
using             33
two               32
patients          31
biodegradation    30
non               29
data              28
three             28
analysis          27
compared          27
soil              27
new               27
results           26
species           25
cell              25
...
engage             1
thermal            1
geochip            1
dominant           1
suggests           1
third              1
usually            1
locomotion         1
rpos               1
scales             1
prefer             1
quite              1
protocatechuate    1
routine            1
agr                1
Length: 3028, dtype: int64

bcf = BigramCollocationFinder.from_words(relevant_words_pairs)

for pair in bcf.nbest(BigramAssocMeasures.likelihood_ratio, 30):
    print ' '.join(pair)

synthetic biology
spider silk
es cell
adjacent segment
medical imaging
dp dtmax
security privacy
industry backgrounds
removal initiation
uv irradiated
gm salmon
persistent crsab
antimicrobial therapy
limb amputation
cellular phone
wireless powered
minimally invasive
phone technology
heavy metals
battery powered
composite mesh
frequency currents
genetically modified
tissue engineering
catheter removal
acting reversible
brassica napus
brown streak
quasi stiffness
data code

bcf.nbest(BigramAssocMeasures.likelihood_ratio, 20)

[(u'synthetic', u'biology'), (u'spider', u'silk'), (u'es', u'cell'), (u'adjacent', u'segment'), (u'medical', u'imaging'), (u'dp', u'dtmax'), (u'security', u'privacy'), (u'industry', u'backgrounds'), (u'removal', u'initiation'), (u'uv', u'irradiated'), (u'gm', u'salmon'), (u'persistent', u'crsab'), (u'antimicrobial', u'therapy'), (u'limb', u'amputation'), (u'cellular', u'phone'), (u'wireless', u'powered'), (u'minimally', u'invasive'), (u'phone', u'technology'), (u'heavy', u'metals'), (u'battery', u'powered')]
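For intuition about what a bigram finder works from, here is a standard-library sketch that counts adjacent word pairs. It ranks by raw frequency, which is a simpler measure than the likelihood ratio NLTK applies above, so it will not reproduce that ranking. The function name and the sample sentence are made up. Because the abstracts are joined into one long word sequence before pairing, a pair can also straddle the boundary between two abstracts.

```python
from collections import Counter


def top_bigrams(words, n=3):
    """Return the n most frequent adjacent word pairs with their counts."""
    pairs = zip(words, words[1:])
    return Counter(pairs).most_common(n)


sample_words = ('synthetic biology uses synthetic biology tools '
                'and spider silk').split()
best = top_bigrams(sample_words, n=1)
```

Only ('synthetic', 'biology') occurs twice in the sample, so it is the single top pair.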

Making word clouds: select the top words¶

Here, we take only the unique words from each abstract, so a word is counted at most once per article.

abs_set_df = DataFrame(articles['words'].apply(lambda x: ' '.join(set(x))).tolist(), columns=['text'])

abs_set_df.head()

| | text |
|---|---|
| 0 | among developed attitude paper identify accept... |
| 1 | aquatic mineralization dose experiments still ... |
| 2 | mfc hypothesized distinctly results nitrogen s... |
| 3 | fungal contaminant tcp accumulative gc morphol... |
| 4 | origin humic mineralization show mainly result... |

5 rows × 1 columns

words = pd.Series(' '.join(abs_set_df['text']).split(' '))

words.value_counts()

study            38
two              23
using            21
results          20
three            20
analysis         20
compared         17
used             16
higher           16
may              16
non              15
based            15
significantly    14
also             14
however          14
...
septal            1
recommendations   1
genomes           1
poking            1
gck               1
optimised         1
varied            1
counting          1
monitoring        1
malware           1
tmc               1
rape              1
occur             1
conversely        1
cda               1
Length: 3028, dtype: int64

top_words = words.value_counts().reset_index()

top_words.columns = ['word', 'count']

top_words.head(15)

| | word | count |
|---|---|---|
| 0 | study | 38 |
| 1 | two | 23 |
| 2 | using | 21 |
| 3 | results | 20 |
| 4 | three | 20 |
| 5 | analysis | 20 |
| 6 | compared | 17 |
| 7 | used | 16 |
| 8 | higher | 16 |
| 9 | may | 16 |
| 10 | non | 15 |
| 11 | based | 15 |
| 12 | significantly | 14 |
| 13 | also | 14 |
| 14 | however | 14 |

15 rows × 2 columns
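Counting each word once per abstract, as `set(x)` does in this section, gives the number of abstracts a word appears in rather than its total number of occurrences. The difference can be seen in a small standard-library sketch; the three toy word lists are invented for illustration.

```python
from collections import Counter

# Three made-up "abstracts", already reduced to word lists.
toy_abstracts = [
    ['soil', 'soil', 'study'],
    ['study', 'cell'],
    ['soil', 'cell', 'cell', 'cell'],
]

# Total occurrences across all abstracts (every repeat counts).
total_counts = Counter(w for words in toy_abstracts for w in words)

# Number of abstracts containing each word (repeats within one abstract ignored).
abstract_counts = Counter(w for words in toy_abstracts for w in set(words))
```

By total occurrences 'cell' leads with 4, but all three words appear in exactly 2 abstracts, so a single article that repeats a term heavily no longer dominates the ranking.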

Exporting word count data as CSV for D3 word-cloudification¶

# top_words.to_csv('../wordcloud2.csv', index=False)

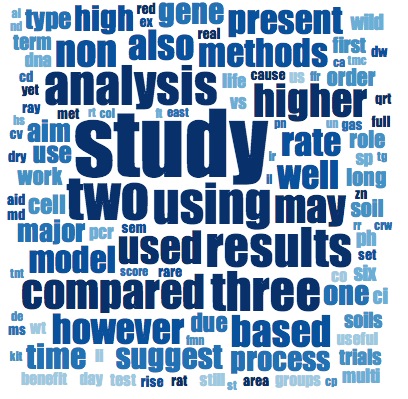

Initial word cloud results¶

When we created the word clouds, we noticed something about the most common words in these article abstracts...

Change over time: working with article abstracts as time series data¶

articles_list = data['response']['docs']

articles = DataFrame(articles_list)

articles = articles[articles['abstract'].notnull()].ix[:,['abstract', 'publication_date']]

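# Pass min_word_len by keyword: a bare positional 3 would be taken as
# Series.apply's convert_dtype argument, not as wordify's min_word_len.
articles.abstract = articles.abstract.apply(wordify, min_word_len=3)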

articles = articles[articles['abstract'].notnull()]

articles.publication_date = pd.to_datetime(articles.publication_date)

articles.head()

| | abstract | publication_date |
|---|---|---|
| 7 | [objective, paper, assess, attitude, malaysian... | 2014-01-29 |
| 16 | [atrazine, atz, metolachlor, met, two, herbici... | 2012-05-15 |
| 17 | [due, environmental, persistence, biotoxicity,... | 2013-08-05 |
| 34 | [intensive, use, chlorpyrifos, resulted, ubiqu... | 2012-10-08 |
| 35 | [background, complex, characteristics, unclear... | 2012-08-09 |

5 rows × 2 columns

print articles.publication_date.min(), articles.publication_date.max()

print len(articles)

2008-04-30 00:00:00 2014-04-11 00:00:00

57

The time series spans ~6 years (2008-04 to 2014-04) with only 57 data points. We need to resample!

There are probably many ways to do this...

articles_timed = articles.set_index('publication_date')

articles_timed.head()

| publication_date | abstract |
|---|---|
| 2014-01-29 | [objective, paper, assess, attitude, malaysian... |
| 2012-05-15 | [atrazine, atz, metolachlor, met, two, herbici... |
| 2013-08-05 | [due, environmental, persistence, biotoxicity,... |
| 2012-10-08 | [intensive, use, chlorpyrifos, resulted, ubiqu... |
| 2012-08-09 | [background, complex, characteristics, unclear... |

5 rows × 1 columns

Using pandas time series resampling functions¶

Using the sum aggregation method works because all the values were lists. The three abstracts published in 2013-05 were concatenated together (see below).

articles_monthly = articles_timed.resample('M', how='sum', fill_method='ffill', kind='period')

articles_monthly.abstract = articles_monthly.abstract.apply(lambda x: np.nan if x == 0 else x)

articles_monthly.fillna(method='ffill', inplace=True)

articles_monthly.head()

| publication_date | abstract |
|---|---|
| 2008-04 | [according, world, health, organization, repor... |
| 2008-05 | [according, world, health, organization, repor... |
| 2008-06 | [according, world, health, organization, repor... |
| 2008-07 | [according, world, health, organization, repor... |
| 2008-08 | [according, world, health, organization, repor... |

5 rows × 1 columns
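The resampling step does two things: it concatenates the word lists that fall in the same month, and it carries the previous month's list forward into months with no articles (which is why 2008-04 through 2008-08 show the same words above). A plain-Python sketch of that idea, without pandas, might look like this. The function name `monthly_ffill` and the sample dates are assumptions for illustration, and this is not the pandas implementation.

```python
from datetime import date


def monthly_ffill(dated_words):
    """Group (date, word_list) pairs by month, then forward-fill empty months."""
    buckets = {}
    for day, words in dated_words:
        # Adding lists concatenates them, which is what 'sum' does per month.
        key = (day.year, day.month)
        buckets[key] = buckets.get(key, []) + words

    year, month = min(buckets)
    last = max(buckets)
    filled = []
    previous = None
    while (year, month) <= last:
        # Months without articles reuse the most recent month's words.
        current = buckets.get((year, month), previous)
        filled.append(('{0}-{1:02d}'.format(year, month), current))
        previous = current
        month += 1
        if month > 12:
            year, month = year + 1, 1
    return filled


sample = [
    (date(2013, 11, 2), ['soil']),
    (date(2013, 11, 20), ['cell', 'gene']),
    (date(2014, 2, 1), ['silk']),
]
monthly = monthly_ffill(sample)
```

Forward-filling keeps the slider continuous, at the cost of showing stale text for months in which nothing was published.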

Making a time slider for abstract text¶

widgetmax = len(articles_monthly) - 1

def textbarf(t):
    html_template = """
    <style>
    #textbarf {
        display: block;
        width: 666px;
        padding: 23px;
        background-color: #ddeeff;
    }
    </style>
    <div id="textbarf"> {{blargh}} </div>"""
    blob = ' '.join(articles_monthly.ix[t]['abstract'])
    html_src = Template(html_template).render(blargh=blob)
    display(HTML(html_src))

widgets.interact(textbarf,
                 t=widgets.IntSliderWidget(min=0, max=widgetmax, step=1, value=42),
                 )

<function __main__.textbarf>