Solving $ A x = b $ via Blocked LU Factorization¶

In this notebook, you will implement a blocked LU factorization, solve a system with a unit lower triangular matrix, and solve a system with an upper triangular matrix. This notebook culminates in a routine that combines these three steps into a routine that solves $ A x = b $ in a computationally efficient way.

Be sure to make a copy!!!!

Preliminaries

Here is a list of laff routines that you might want to use in this notebook:

-

laff.trsv( uplo, trans, diag, A, b )Solves $Ax = b$ where $x$ and $b$ are vectors. -

laff.trsm( uplo, trans, diag, A, B )Solves $AX = B$ where $X$ and $B$ are matrices. -

laff.gemm( alpha, A, B, beta, C )$C := \alpha A B + \beta C$

These three methods were added to the laff library after the course started. If you're having problems with git and are manually downloading the notebooks, check the wiki page for notebooks for help on getting updated laff routines as well.

And last but not least, copy and paste your method from 6.3 Solving A x b via LU factorization and triangular solves into the box below. We'll be using it during this notebook. Recall that it overwrites $A$ with $L$ in the strictly lower triangular part and $U$ in the upper triangular part. Make sure you call the routine LU_unb_var5

import flame

import laff

def LU_unb_var5(A):

ATL, ATR, \

ABL, ABR = flame.part_2x2(A, \

0, 0, 'TL')

while ATL.shape[0] < A.shape[0]:

A00, a01, A02, \

a10t, alpha11, a12t, \

A20, a21, A22 = flame.repart_2x2_to_3x3(ATL, ATR, \

ABL, ABR, \

1, 1, 'BR')

#------------------------------------------------------------#

laff.invscal( alpha11, a21 ) # a21 := a21 / alpha11

laff.ger( -1.0, a21, a12t, A22 ) # A22 := A22 - a21 * a12t

#------------------------------------------------------------#

ATL, ATR, \

ABL, ABR = flame.cont_with_3x3_to_2x2(A00, a01, A02, \

a10t, alpha11, a12t, \

A20, a21, A22, \

'TL')

flame.merge_2x2(ATL, ATR, \

ABL, ABR, A)

Now, let's create a matrix $ A $ and right-hand side $ b $

import numpy as np

import laff

import flame

# Form the matrices

m = 200

L = np.matrix( np.tril(np.random.rand(m,m), -1 ) + np.eye(m) )

U = np.matrix( np.triu(np.random.rand(m,m) ) + np.eye(m) * m )

A = L * U

# Create a large, random solution vector x

x = np.matrix( np.random.rand(m,1) )

# Store the original value of x

xold = np.matrix( np.copy( x ) )

# Create a solution vector b so that A x = b

b = A * x

# Later, we are also going to solve A x = b2. Here we create that b2:

x2 = np.matrix( np.random.rand(m,1) )

b2 = A * x2

# Set the block size, we'll use it later.

# Since we're just messing around with blocked algorithms,

# we set this totally arbitrarily

nb = 20

Implement the blocked LU factorization routine from 6.4.1

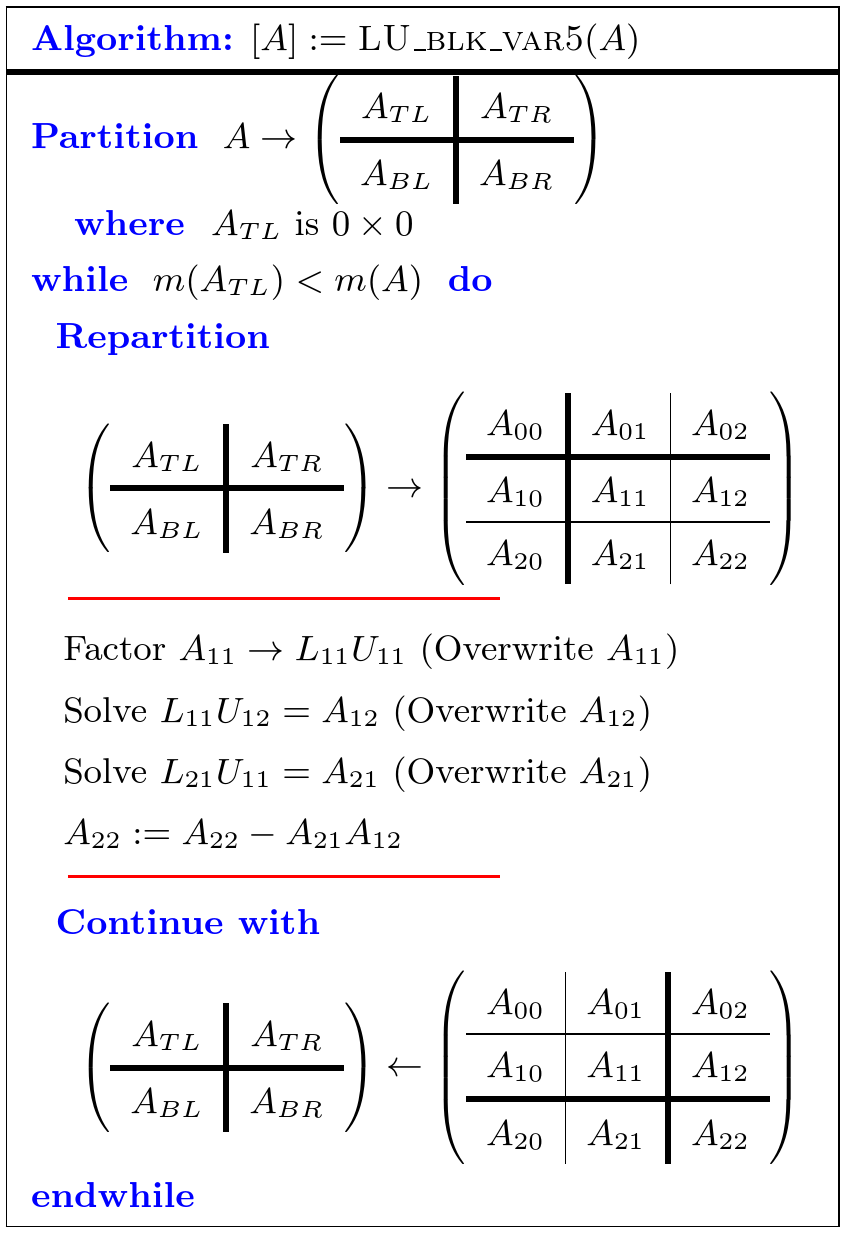

Here is the algorithm:

Important: if you make a mistake, rerun ALL cells above the cell in which you were working before you rerun the one in which you are working.

Create the routine

LU_blk_var5( A )

with the Spark webpage for the algorithm given above.

import flame

import laff

def LU_blk_var5(A, nb_alg):

ATL, ATR, \

ABL, ABR = flame.part_2x2(A, \

0, 0, 'TL')

while ATL.shape[0] < A.shape[0]:

block_size = min(ABR.shape[0], nb_alg)

A00, A01, A02, \

A10, A11, A12, \

A20, A21, A22 = flame.repart_2x2_to_3x3(ATL, ATR, \

ABL, ABR, \

block_size, block_size, 'BR')

#------------------------------------------------------------#

LU_unb_var5( A11 )

laff.trsm( 'Lower triangular', 'No transpose', 'Unit diagonal', A11, A12 )

laff.trsm( 'Upper triangular', 'Transpose', 'Nonunit diagonal', A11, A21 )

laff.gemm( -1.0, A21, A12, 1.0, A22 )

#------------------------------------------------------------#

ATL, ATR, \

ABL, ABR = flame.cont_with_3x3_to_2x2(A00, A01, A02, \

A10, A11, A12, \

A20, A21, A22, \

'TL')

flame.merge_2x2(ATL, ATR, \

ABL, ABR, A)

Test the routine

Note that the code you generated using Spark has two input parameters, A and nb_alg. This nb_alg is the block size that you want to use to do your blocked LU decomposition, we'll set it arbitrarily to 20 for now and store it in the variable nb.

Important: if you make a mistake, rerun ALL cells above the cell in which you were working before you rerun the one in which you are working.

# recreate matrix A

A = L * U

# recreate the right-hand side

b = A * xold

# apply blocked LU to matrix A

# remember nb holds our block size

LU_blk_var5( A, nb )

Compare the overwritten matrix, $ A $, to the original matrices, $ L $ and $ U $. The upper triangular part of $ A $ should equal $ U $ and the strictly lower triangular part of $ A $ should equal the strictly lower triangular part of $ L $. If this is the case, the maximum value in the matrix $A - L - U$ should be close to zero.

Important: if you make a mistake, rerun ALL cells above the cell in which you were working before you rerun the one in which you are working.

# Compare A to the original L and U matrices

print( 'Maximum value of (A - L - U) after factorization' )

print( np.max( np.abs( A - np.tril(L,-1) - U ) ) ) #The "-1" ignores the diagonal

Implement the routine Solve from 6.3.4 using the blocked LU instead of the regular LU

(if you have not yet visited Unit 6.3.4, do so now!)

This time, we do NOT use Spark! What we need to do is write a routine that, when given a matrix $ A $ and right-hand side vector $ b $, solves $ A x = b $, overwriting $ A $ with the LU factorization and overwriting $ b $ with the solution vector $ x $:

- $ A \rightarrow L U $, overwriting $ A $ with $ L $ and $ U $. Use the Blocked version.

- Solve $ L z = b $, overwriting $ b $ with $ z $.

- Solve $ U x = z $, where $ z $ is stored in vector $ b $ and $ x $ overwrites $ b $.

Important: if you make a mistake, rerun ALL cells above the cell in which you were working before you rerun the one in which you are working.

Create the routine

Solve( A, b )

def Solve( A, b ):

# insert appropriate calls to routines you have written here!

# remember the variable nb holds our block size

LU_blk_var5( A, 1 )

laff.trsv( 'Lower triangular', 'No transpose', 'Unit diagonal', A, b )

laff.trsv( 'Upper triangular', 'No transpose', 'Nonunit diagonal', A, b )

Test Solve

Important: if you make a mistake, rerun ALL cells above the cell in which you were working before you rerun the one in which you are working.

# just to be sure, let's start over. We'll recreate A, x, and b, run all the routines, and

# then compare the updated b to the original vector x.

A = L * U

b = A * x

Solve( A, b )

print( '2-Norm of Updated b - original x' )

print( np.linalg.norm(b - x) )

In theory, b - x should yield a zero vector whose two-norm, $||b -x||_2$, is close to zero...

What if a new right-hand side comes along?

What if we are presented with a new right-hand side, call it $ b_2 $, with which we want to solve $ A x = b_2 $, overwriting $ b_2 $ with the solution? (We created such a $ b_2 $ at the top of this notebook.)

Important: if you make a mistake, rerun ALL cells above the cell in which you were working before you rerun the one in which you are working.

Notice that you can take the matrix $A $ that was modified by Solve and use it with the appropriate calls to laff.trsv:

# insert appropriate calls here.

laff.trsv( 'Lower triangular', 'No transpose', 'Unit diagonal', A, b2 )

laff.trsv( 'Upper triangular', 'No transpose', 'Nonunit diagonal', A, b2 )

print( '2-Norm of updated b2 - original x2' )

print( np.linalg.norm(b2 - x2) )

$||x_2 - b_2||_2$ should be close to zero...