Point cloud generation¶

In this article the algorithm to obtain a 3D point cloud in world coordinates system, from a vector of detected 2D points in the camera image is explained.

Acronyms¶

| Symbol | Description |

|---|---|

| $c$ | Camera coordinate system |

| $w$ | World coordinate system |

| $w_\theta$ | World coordinate system rotated $\theta$ in z coordinate |

| $(u, v)$ | Coordinates of the projection point (px) |

| $K$ | Camera matrix or matrix of intrinsics parameters (px) |

| $f_x, f_y$ | Vertical and horizontal focal lengths (px) |

| $c_x, c_y$ | Vertical and horizontal optical centers (px) |

| $\textbf{x}$ | Coordinates of the projection point normalized |

| $d$ | Laser plane minimum distance (mm) |

| $\textbf{n}$ | Laser plane normal vector normalized |

| $\textbf{X}_c$ | Coordinates of the projection point in $c$ (mm) |

| $R_c$ | World rotation matrix in $c$ |

| $\textbf{t}_c$ | World translation vector in $c$ (mm) |

| $\textbf{X}_{w_\theta}$ | Coordinates of the projection point in $w_\theta$(mm) |

| $R_z(\theta)$ | Rotation matrix in z of $\theta$ |

| $\textbf{X}_w$ | Coordinates of the projection point in $w$ (mm) |

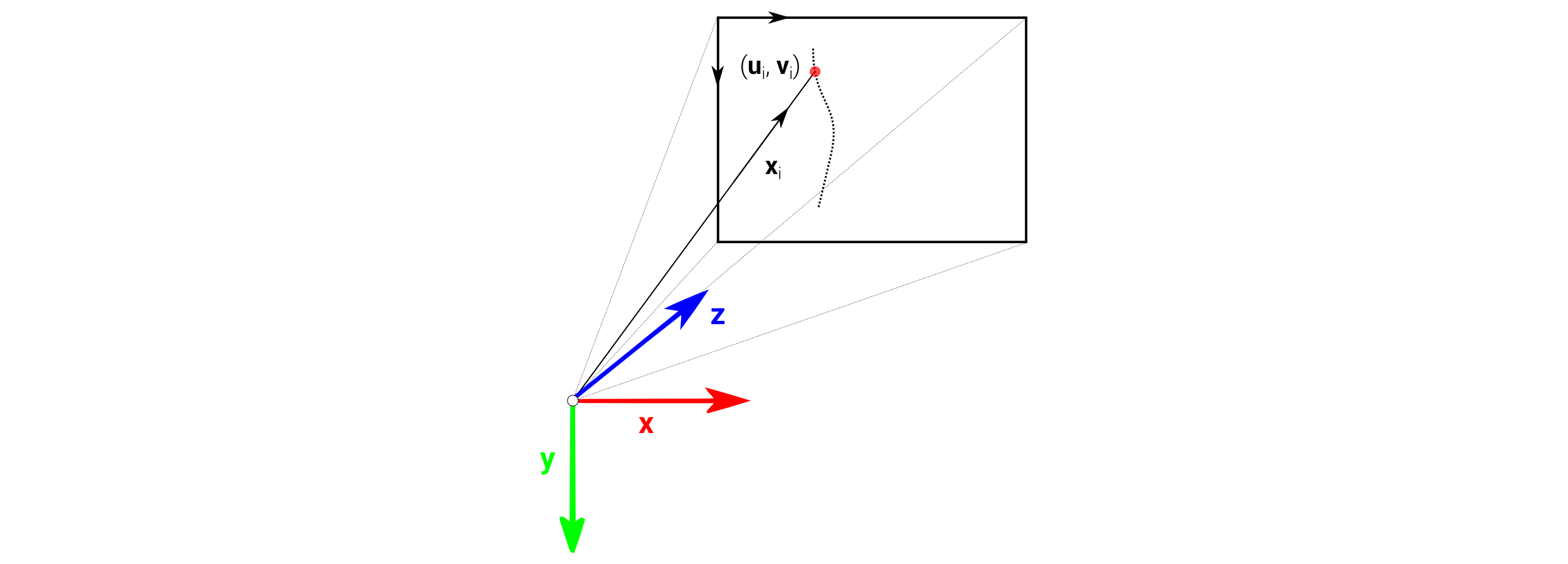

Image coordinates¶

A vector of $(u, v)$ values has been obtained from previous image processing of the laser impact into the object. To simplify it, only one point $(u, v)$ will be used instead of the whole vector. The coordinate $u$ corresponds to the horizontal coordinate and $v$ to the vertical coordinate of the image. The origin of coordinates is in the top-left corner of the image.

import numpy as np

u = 353.21

v = 231.96

Projection point¶

Camera matrix relates the image system with the normalized 3D coordinates. It contains vertical an horizontal focal lengths and optical centers in pixel units.

Using $u$ and $v$ coordinates from the video image where the point is captured, the projection point vector without units can be obtained.

fx = 1430; fy = 1430; cx = 480; cy = 640

x = np.matrix([[(u - cx) / fx], [(v - cy) / fy], [1]])

print(x)

[[-0.08866434] [-0.28534266] [ 1. ]]

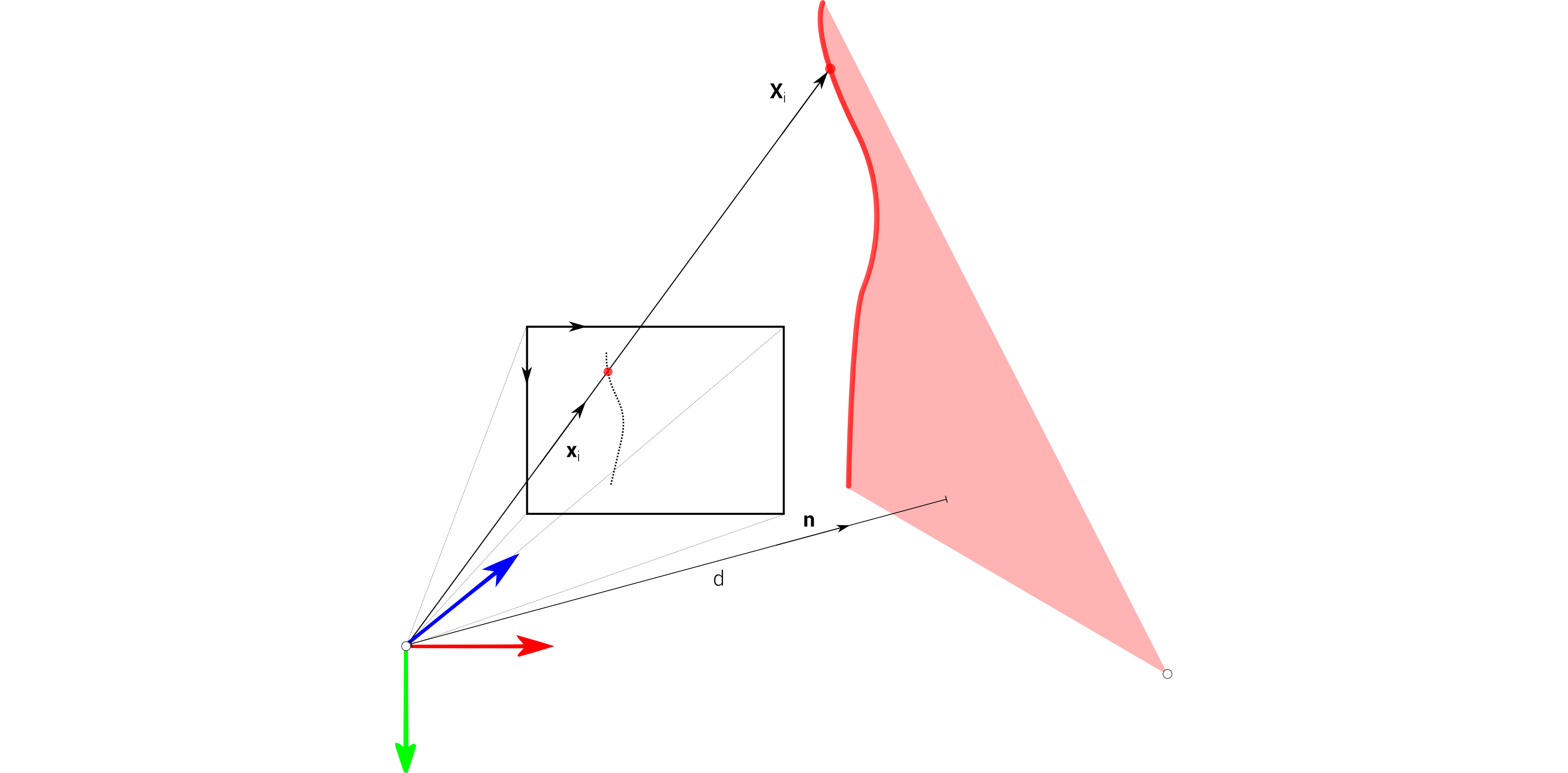

Laser plane intersection¶

Normal vector $\textbf{n}$ and minimum distance $d$ is the minimum information required to define a plane in a three dimensional space. In order to determine the real coordinates of the point in mm, the intersection calculation of the projection point normalized with the corresponding laser plane is needed.

d = 156.11

n = np.matrix([[-0.86952], [-0.020884], [0.493456]])

Xc = (d / float(n.T.dot(x))) * x

print(Xc)

[[ -24.00891089] [ -77.26631436] [ 270.78430923]]

The result is the point in a real 3D space respect to the camera coordinate system, in mm.

Homogeneus transformation¶

The point $\textbf{X}_c$ is transformed to the world inertial system using homogeneus transformation $[R_c, \textbf{t}_c]$

R = np.matrix([[0,1,0],[0,0,-1],[-1,0,0]])

t = np.matrix([[-3],[22],[315]])

Xwo = R.T * Xc - R.T * t

print(Xwo)

[[ 44.21569077] [-21.00891089] [ 99.26631436]]

This is the same point computed in the previous step, but in the world intertial system instead, that is, a system attached to the platform.

World rotation¶

Finally, the point is transformed to a fixed common system. To do that, the performance of another transformation from the inertial system to the world fixed coordinate system is needed. In Ciclop, this is a rotation in common z axis of $\theta$, the current angle in the scanning process.

theta = -37.35 * np.pi / 180.0

c = np.cos(theta)

s = np.sin(theta)

Rz = np.matrix([[c, -s, 0], [s, c, 0], [0, 0, 1]])

Xw = Rz * Xwo

print(Xw)

[[ 22.40327826] [-43.52579431] [ 99.26631436]]