from __future__ import print_function

import numpy as np

import matplotlib as mpl

import matplotlib.pyplot as plt

import matplotlib.cm as cm

%matplotlib inline

Machine Learning¶

Machine learning is the science of getting computers to act without being explicitly programmed. It underlies self-driving cars, effective web search, speech recognition, and much more.

Machine learning is expanding its reach to various domains.

**Spam Detection**: When Gmail automatically flags spam in your inbox, it is applying machine learning techniques.

**Credit Card Fraud**: Identifying 'unusual' activity on a credit card is often a machine learning problem involving anomaly detection.

**Face Detection**: When Facebook automatically recognizes the faces of your friends in a photo, a machine learning process is running in the background.

**Product Recommendation**: Whether Netflix suggests that you watch House of Cards or Amazon insists that you finally buy those Bose headphones, it's not magic, but machine learning.

**Customer Segmentation**: Using usage data from a product's trial period to identify the users who will go on to subscribe to the paid version is a learning problem.

**Medical Diagnosis**: Using a database of patients' symptoms and treatments, a popular machine learning problem is to predict whether a patient has a particular illness.

Today's lesson will walk you through some machine learning algorithms and demonstrate them on real-world problems.


Types of Machine Learning algorithms¶

The problems listed above are not all of the same kind. For instance, the customer segmentation problem aims to cluster the customers into two sets: those who will pay for the full version and those who won't. The face recognition problem aims to classify a face as one of the people in your friends list. Broadly, there are two types of machine learning algorithms.

Supervised learning: The algorithm learns from labeled examples (known as the training data). For example, when you rate a movie after you view it on Netflix, the suggestions that follow are predicted using a database of your ratings. When the quantity to predict is continuous (for example, a stock price), the problem falls under regression. When it is a class label (as in the Netflix problem), the learning problem is called a classification problem.

Unsupervised learning: In the case of unsupervised learning, there is no labeled data set that can be used for making further predictions. The learning algorithm tries to find patterns or associations in the given data set. Identifying clusters in the data (as in the customer segmentation problem) and reducing the dimensions of the data both fall in the unsupervised category.

Machine Learning in Python¶

Before 2007, there was no built-in machine learning package in Python. David Cournapeau (a core developer of numpy/scipy) developed scikit-learn as part of a Google Summer of Code project. Matthieu Brucher later joined, and the first release was made in January 2010. The project now has 30 contributors and many sponsors, including Google and the Python Software Foundation.

scikit-learn provides a range of supervised and unsupervised learning algorithms via a Python interface. It builds on numpy and scipy, and is commonly used together with pandas and matplotlib.

Today's lesson will go over some of these algorithms. We start from the basics, by first learning how to represent data in scikit-learn.

scikit-learn: Representing Data¶

Most machine learning algorithms implemented in scikit-learn expect data to be stored in a two-dimensional array or matrix. The arrays can be either numpy arrays or, in some cases, scipy.sparse matrices. The shape of the array is expected to be [n_samples, n_features].

- n_samples: The number of samples. Each sample is an item to process (e.g. classify). A sample can be a document, a picture, a sound, a video, an astronomical object, a row in a database or CSV file, or whatever you can describe with a fixed set of quantitative traits.

- n_features: The number of features or distinct traits that can be used to describe each item in a quantitative manner. Features are generally real-valued, but may be boolean or discrete-valued in some cases. The number of features must be fixed in advance. However, it can be very high dimensional (e.g. millions of features), with most of them being zeros for a given sample. This is a case where scipy.sparse matrices can be useful, in that they are much more memory-efficient than numpy arrays.
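As a rough illustration of the [n_samples, n_features] layout, the sketch below builds a small dense array and its sparse counterpart. The numbers are made up for the example; only numpy and scipy.sparse are assumed to be installed.

```python
import numpy as np
from scipy import sparse

# Hypothetical toy data: 4 samples (rows), each described by 6 features (columns).
X_dense = np.array([
    [0.0, 0.0, 3.0, 0.0, 0.0, 0.0],
    [0.0, 1.5, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0, 0.0, 2.0],
    [4.0, 0.0, 0.0, 0.0, 0.0, 0.0],
])
n_samples, n_features = X_dense.shape
print(n_samples, n_features)

# Compressed sparse row (CSR) storage keeps only the non-zero entries.
X_sparse = sparse.csr_matrix(X_dense)
print(X_sparse.shape, X_sparse.nnz)

# Converting back to a dense array recovers the same values.
print(np.array_equal(X_sparse.toarray(), X_dense))
```

With only four non-zero entries out of 24, the sparse form stores far less data; the saving grows quickly as the number of mostly-zero features increases.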

Loading a dataset¶

Before we begin with machine learning, let's start at its very root by understanding how to load a dataset. We are going to start with the iris dataset stored in scikit-learn.

[Figure: photographs of Iris Setosa, Iris Versicolor, and Iris Virginica]

Question

If we want to design an algorithm to recognize iris species, what might the data be?

(Remember: we need a 2D array of size [n_samples x n_features].)

- What would the n_samples refer to?

- What might the n_features refer to?

Remember that there must be a fixed number of features for each sample, and feature number i must be a similar kind of quantity for each sample.

scikit-learn has a very straightforward set of data on these iris species. The data consists of the following:

Features in the Iris dataset:

- sepal length in cm

- sepal width in cm

- petal length in cm

- petal width in cm

Target classes to predict:

- Iris Setosa

- Iris Versicolour

- Iris Virginica

scikit-learn embeds a copy of the iris CSV file along with a helper function to load it into numpy arrays:

from sklearn import datasets

iris = datasets.load_iris() #150 observations of the iris flower with sepal length, sepal width, petal length and petal width

The data is stored in the .data attribute

#iris.data.shape

#iris.target_names

iris.feature_names

['sepal length (cm)', 'sepal width (cm)', 'petal length (cm)', 'petal width (cm)']

This data is four dimensional, but we can visualize two of the dimensions at a time using a simple scatter-plot:

x_index = 0

y_index = 1

# this formatter will label the colorbar with the correct target names

formatter = plt.FuncFormatter(lambda i, *args: iris.target_names[int(i)])

plt.scatter(iris.data[:, x_index], iris.data[:, y_index],

c=iris.target)

plt.colorbar(ticks=[0, 1, 2], format=formatter)

plt.xlabel(iris.feature_names[x_index])

plt.ylabel(iris.feature_names[y_index])

plt.show()

Exercise: Change x_index and y_index in the above script and find the combination of two features that best separates the three classes. This exercise is a preview of dimensionality reduction, which we'll see later.

Other datasets

- Packaged Data: these small datasets are packaged with the scikit-learn installation, and can be loaded using the tools in sklearn.datasets.load_*

- Downloadable Data: these larger datasets are available for download, and scikit-learn includes tools which streamline this process. These tools can be found in sklearn.datasets.fetch_*

- Generated Data: there are several datasets which are generated from models based on a random seed. These are available through the sklearn.datasets.make_* functions
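As a small sketch of the generated-data tools, the example below calls two of the make_* functions. The sample counts, noise level, and seeds are arbitrary choices for illustration.

```python
from sklearn import datasets

# Two concentric circles with a little noise; random_state fixes the seed.
X_circles, y_circles = datasets.make_circles(
    n_samples=100, noise=0.05, factor=0.5, random_state=0)
print(X_circles.shape, y_circles.shape)

# Three Gaussian blobs in two dimensions.
X_blobs, y_blobs = datasets.make_blobs(
    n_samples=150, n_features=2, centers=3, random_state=0)
print(X_blobs.shape, y_blobs.shape)
```

Both functions return the data in the [n_samples, n_features] layout described earlier, together with an array of integer labels.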

datasets.make_circles

<function sklearn.datasets.samples_generator.make_circles>

import pylab as pl

mpl.rcParams['figure.figsize']=[16,5.4]

digits = datasets.load_digits()

fig, (ax0, ax1, ax2) = plt.subplots(ncols=3, sharex=True)

ax0.matshow(digits.images[0])

ax1.matshow(digits.images[0], cmap=pl.cm.gray_r)

ax2.imshow(digits.images[2], cmap=pl.cm.gray_r)

plt.show()

Estimator

Every algorithm is exposed in scikit-learn via an "Estimator" object. For instance, linear regression is available as follows:

from sklearn.linear_model import LinearRegression

Estimator parameters: All the parameters of an estimator can be set when it is instantiated, and have suitable default values:

# the normalize parameter used in older versions was removed in scikit-learn 1.2

model = LinearRegression(fit_intercept=True)

print(model.fit_intercept)

True

print(model)

LinearRegression()

Estimated Model parameters: When data is fit with an estimator, parameters are estimated from the data at hand. All the estimated parameters are attributes of the estimator object ending with an underscore:

mpl.rcParams['figure.figsize']=[6,3]

x = np.array([0, 1, 2])

y = np.array([0, 1, 2])

plt.plot(x, y, 'o')

plt.xlim(-0.5, 2.5)

plt.ylim(-0.5, 2.5)

plt.show()

# The input data for sklearn is 2D: (samples == 3 x features == 1)

X = x[:, np.newaxis]

print (X)

print (y)

[[0] [1] [2]] [0 1 2]

model.fit(X, y)

print (model.coef_)

print (model.intercept_)

[ 1.] 1.11022302463e-16

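# residues_ is no longer available in current scikit-learn releases;

# compute the residual sum of squares directly instead

np.sum((model.predict(X) - y) ** 2)

The model found a line with slope 1 and intercept 0 (up to floating-point round-off), so the residual sum of squares is essentially zero.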

Supervised Learning¶

In supervised learning, the computer is presented with a set of sample data (the "training" data) along with the corresponding outputs. The idea is to develop a rule or model that maps the inputs to the outputs, so that when the computer is presented with a new input, it can predict the output, for example by classifying the input into a particular category. Spam filtering is one instance: the learning algorithm is presented with email messages labeled beforehand as "spam" or "not spam", and produces a program that labels unseen messages as either spam or not.

Essentially we have a dataset consisting of both features and labels. The task is to construct an estimator which is able to predict the label of an object given the set of features.

Supervised learning is further broken down into two categories, classification and regression. In classification, the label is discrete, while in regression, the label is continuous.

scikit-learn supports a number of supervised learning algorithms. We will illustrate them with examples based on the following:

- KNN (K Nearest Neighbours)

- Linear Regression

- Support Vector Machines

Classification¶

A classification algorithm may be used to draw a dividing boundary between groups of points that belong to different classes. Here we classify iris samples with k-nearest neighbours (KNN):

from sklearn import neighbors, datasets

iris = datasets.load_iris()

X, y = iris.data, iris.target

# create the model

knn = neighbors.KNeighborsClassifier(n_neighbors=2)

# fit the model

knn.fit(X, y)

# What kind of iris has 3cm x 5cm sepal and 4cm x 2cm petal?

# call the "predict" method:

result = knn.predict([[3, 5, 4, 2],])

print(iris.target_names[result])

['versicolor']
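For intuition about what the classifier above does, k-nearest-neighbours prediction can be sketched in plain NumPy: find the k training samples closest to the query and take a majority vote over their labels. The helper name knn_predict is hypothetical and this is not scikit-learn's implementation, which uses more efficient neighbour searches.

```python
import numpy as np
from collections import Counter
from sklearn import datasets

iris = datasets.load_iris()
X_train, y_train = iris.data, iris.target

def knn_predict(x_new, X_train, y_train, k=5):
    """Predict a label by majority vote among the k closest training samples."""
    # Euclidean distance from x_new to every training sample
    distances = np.sqrt(((X_train - x_new) ** 2).sum(axis=1))
    # Indices of the k smallest distances
    nearest = np.argsort(distances)[:k]
    # Most common label among those neighbours
    votes = Counter(y_train[nearest].tolist())
    return votes.most_common(1)[0][0]

# Query with a hypothetical flower that has short, narrow petals
label = knn_predict(np.array([5.0, 3.6, 1.3, 0.25]), X_train, y_train, k=5)
print(iris.target_names[label])
```

The choice of k trades off noise sensitivity (small k) against blurring of class boundaries (large k).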

Ordinary least squares (OLS) linear regression fits a linear model by choosing the coefficients $\alpha = (\alpha_1, \alpha_2, \ldots, \alpha_n)$ that minimize the residual sum of squares between the observed responses in the dataset and the responses predicted by the linear approximation. Mathematically, it solves a problem of the form: $$\min_{\alpha} \| X\alpha - y \|_2^2 \text{.}$$
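The minimization above can be checked numerically. The sketch below solves the least-squares problem directly with np.linalg.lstsq on made-up data and compares the result with LinearRegression. The data, the seed, and the extra column of ones (used to represent the intercept) are assumptions of this example.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

rng = np.random.RandomState(0)
X = rng.random_sample(size=(50, 2))
# Hypothetical ground truth: y = 1 + 2*x0 - 3*x1 plus a little noise
y = 1.0 + 2.0 * X[:, 0] - 3.0 * X[:, 1] + 0.1 * rng.normal(size=50)

# Append a column of ones so that the intercept becomes one of the coefficients
X_design = np.column_stack([X, np.ones(len(X))])
alpha = np.linalg.lstsq(X_design, y, rcond=None)[0]

model = LinearRegression().fit(X, y)
print(alpha[:2], alpha[2])
print(model.coef_, model.intercept_)
```

Both approaches minimize the same residual sum of squares, so the two printed lines should agree to within floating-point precision.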

from sklearn import linear_model

clf = linear_model.LinearRegression()

clf.fit ([[0, 0], [1, 1], [2, 2]], [0, 1, 2])

LinearRegression(copy_X=True, fit_intercept=True, normalize=False)

clf.coef_

array([ 0.5, 0.5])

# Create some simple data

import numpy as np

np.random.seed(0)

X = np.random.random(size=(20, 1))

y = 3 * X.squeeze() + 2 + np.random.normal(size=20)

# Fit a linear regression to it

model = LinearRegression(fit_intercept=True)

model.fit(X, y)

# coef_ is an array with one entry per feature, so take its first element

print("Model coefficient: %.5f, and intercept: %.5f"
      % (model.coef_[0], model.intercept_))

# Plot the data and the model prediction

X_test = np.linspace(0, 1, 100)[:, np.newaxis]

y_test = model.predict(X_test)

plt.plot(X.squeeze(), y, 'x')

plt.plot(X_test.squeeze(), y_test);

Model coefficient: 3.93491, and intercept: 1.46229

The model has been learned from the training data, and can be used to predict the result of test data: here, we might be given an x-value, and the model would allow us to predict the y value.

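Support vector machines (SVMs) are a set of supervised learning methods used for classification, regression, and outlier detection.

Their main advantages are:

- They are effective in high-dimensional spaces.

- They remain effective when the number of dimensions is greater than the number of samples.

- They are memory efficient, because the decision function uses only a subset of the training points (the support vectors).

- They are versatile: different kernel functions can be specified for the decision function.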

The support vector machines in scikit-learn support both dense (numpy.ndarray) and sparse (any scipy.sparse) sample vectors as input. However, to use an SVM to make predictions for sparse data, it must have been fit on such data.
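A minimal sketch of the sparse case, assuming the iris data is simply converted to CSR format for illustration (it is not actually sparse): the model is fit on a scipy.sparse matrix and then asked to predict for a sparse query.

```python
from scipy import sparse
from sklearn import datasets, svm

iris = datasets.load_iris()

# Convert the dense iris array to a sparse matrix purely for demonstration
X_sparse = sparse.csr_matrix(iris.data)

# Fit on sparse input so that sparse input can also be used for prediction
sparse_svc = svm.SVC(kernel='linear').fit(X_sparse, iris.target)

query = sparse.csr_matrix([[5.0, 3.6, 1.3, 0.25]])
pred = sparse_svc.predict(query)
print(iris.target_names[pred])
```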

from sklearn import svm

clf = svm.LinearSVC()

clf.fit(iris.data, iris.target) #learn from the data

LinearSVC(C=1.0, class_weight=None, dual=True, fit_intercept=True,

intercept_scaling=1, loss='l2', multi_class='ovr', penalty='l2',

random_state=None, tol=0.0001, verbose=0)

clf.predict([[ 5.0, 3.6, 1.3, 0.25]])

array([0])

clf.coef_ #Access the coefficients

from sklearn import svm

svc = svm.SVC(kernel='linear')

svc=svc.fit(iris.data, iris.target)

svc.coef_

array([[-0.04625854, 0.5211828 , -1.00304462, -0.46412978],

[-0.00722313, 0.17894121, -0.53836459, -0.29239263],

[ 0.59549776, 0.9739003 , -2.03099958, -2.00630267]])

print(__doc__)

import numpy as np

import matplotlib.pyplot as plt

from sklearn import svm, datasets

# import some data to play with

iris = datasets.load_iris()

X = iris.data[:, :2] # we only take the first two features. We could

# avoid this ugly slicing by using a two-dim dataset

y = iris.target

h = .02 # step size in the mesh

# we create an instance of SVM and fit our data. We do not scale our

# data since we want to plot the support vectors

C = 1.0 # SVM regularization parameter

svc = svm.SVC(kernel='linear', C=C).fit(X, y)

rbf_svc = svm.SVC(kernel='rbf', gamma=0.7, C=C).fit(X, y)

poly_svc = svm.SVC(kernel='poly', degree=3, C=C).fit(X, y)

lin_svc = svm.LinearSVC(C=C).fit(X, y)

# create a mesh to plot in

x_min, x_max = X[:, 0].min() - 1, X[:, 0].max() + 1

y_min, y_max = X[:, 1].min() - 1, X[:, 1].max() + 1

xx, yy = np.meshgrid(np.arange(x_min, x_max, h),

np.arange(y_min, y_max, h))

# title for the plots

titles = ['SVC with linear kernel',

'LinearSVC (linear kernel)',

'SVC with RBF kernel',

'SVC with polynomial (degree 3) kernel']

mpl.rcParams['figure.figsize']=[12,12]

for i, clf in enumerate((svc, lin_svc, rbf_svc, poly_svc)):
    # Plot the decision boundary. For that, we will assign a color to each
    # point in the mesh [x_min, x_max]x[y_min, y_max].
    plt.subplot(2, 2, i + 1)
    plt.subplots_adjust(wspace=0.4, hspace=0.4)

    Z = clf.predict(np.c_[xx.ravel(), yy.ravel()])

    # Put the result into a color plot
    Z = Z.reshape(xx.shape)
    plt.contourf(xx, yy, Z, cmap=plt.cm.Paired, alpha=0.8)

    # Plot also the training points
    plt.scatter(X[:, 0], X[:, 1], c=y, cmap=plt.cm.Paired)
    plt.xlabel('Sepal length')
    plt.ylabel('Sepal width')
    plt.xlim(xx.min(), xx.max())
    plt.ylim(yy.min(), yy.max())
    plt.xticks(())
    plt.yticks(())
    plt.title(titles[i])

plt.show()

Automatically created module for IPython interactive environment

Unsupervised learning¶

In the case of unsupervised learning, the idea is to find a hidden pattern in the data. In a sense, you can think of unsupervised learning as a means of discovering labels from the data itself. Unsupervised learning comprises tasks such as dimensionality reduction, clustering, and density estimation.

scikit-learn supports several approaches to unsupervised learning, including:

- K-means clustering

- Principal Component Analysis

- Neural networks

K-means Clustering¶

Clustering is the task of grouping a set of objects such that objects in the same group are similar to one another in some way. The simplest clustering algorithm is k-means. It divides a set into k clusters by alternating two steps: each observation is assigned to the cluster whose mean is nearest (in n-dimensional space), and the means are then recomputed from the assigned observations. These steps are repeated until the clusters converge, or for at most max_iter rounds.
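The two alternating steps can be sketched in a few lines of NumPy. This is an illustrative version only: the function name kmeans_sketch, the random initialization, and the demo data are assumptions of the example, and scikit-learn's KMeans adds smarter initialization (k-means++) and multiple restarts.

```python
import numpy as np

def kmeans_sketch(X, k, max_iter=100, seed=0):
    """Plain k-means: alternate assignment and mean-update steps."""
    rng = np.random.RandomState(seed)
    # Start from k distinct samples chosen at random
    centers = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(max_iter):
        # Assignment step: index of the nearest center for every sample
        distances = np.linalg.norm(
            X[:, np.newaxis, :] - centers[np.newaxis, :, :], axis=2)
        labels = distances.argmin(axis=1)
        # Update step: each center moves to the mean of its assigned samples
        # (an empty cluster keeps its previous center)
        new_centers = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break  # converged: the means no longer move
        centers = new_centers
    return centers, labels

# Two well-separated hypothetical blobs, around (0, 0) and (5, 5)
rng = np.random.RandomState(1)
X_demo = np.vstack([rng.normal(0, 0.3, size=(50, 2)),
                    rng.normal(5, 0.3, size=(50, 2))])
centers, labels = kmeans_sketch(X_demo, k=2)
print(np.sort(centers[:, 0]))
```

Because the result depends on the starting centers, practical implementations rerun the procedure from several initializations and keep the best clustering.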

print(__doc__)

# Code source: Gaël Varoquaux

# Modified for documentation by Jaques Grobler

# License: BSD 3 clause

import numpy as np

import matplotlib.pyplot as plt

from mpl_toolkits.mplot3d import Axes3D

from sklearn.cluster import KMeans

from sklearn import datasets

np.random.seed(5)

centers = [[1, 1], [-1, -1], [1, -1]]

iris = datasets.load_iris()

X = iris.data

y = iris.target

estimators = {'k_means_iris_3': KMeans(n_clusters=3),

'k_means_iris_8': KMeans(n_clusters=8),

'k_means_iris_bad_init': KMeans(n_clusters=3, n_init=1,

init='random')}

fignum = 1

for name, est in estimators.items():
    fig = plt.figure(fignum, figsize=(4, 3))
    plt.clf()
    # add_axes registers the 3D axes with the figure on current matplotlib
    ax = fig.add_axes([0, 0, .95, 1], projection='3d', elev=48, azim=134)

    est.fit(X)
    labels = est.labels_

    # np.float was removed from NumPy; the built-in float is equivalent here
    ax.scatter(X[:, 3], X[:, 0], X[:, 2], c=labels.astype(float))

    ax.xaxis.set_ticklabels([])
    ax.yaxis.set_ticklabels([])
    ax.zaxis.set_ticklabels([])
    ax.set_xlabel('Petal width')
    ax.set_ylabel('Sepal length')
    ax.set_zlabel('Petal length')
    fignum = fignum + 1

# Plot the ground truth
fig = plt.figure(fignum, figsize=(4, 3))
plt.clf()
ax = fig.add_axes([0, 0, .95, 1], projection='3d', elev=48, azim=134)

for name, label in [('Setosa', 0),
                    ('Versicolour', 1),
                    ('Virginica', 2)]:
    ax.text3D(X[y == label, 3].mean(),
              X[y == label, 0].mean() + 1.5,
              X[y == label, 2].mean(), name,
              horizontalalignment='center',
              bbox=dict(alpha=.5, edgecolor='w', facecolor='w'))

# Reorder the labels to have colors matching the cluster results
y = np.choose(y, [1, 2, 0]).astype(float)
ax.scatter(X[:, 3], X[:, 0], X[:, 2], c=y)

ax.xaxis.set_ticklabels([])
ax.yaxis.set_ticklabels([])
ax.zaxis.set_ticklabels([])
ax.set_xlabel('Petal width')
ax.set_ylabel('Sepal length')
ax.set_zlabel('Petal length')
plt.show()

Automatically created module for IPython interactive environment

Principal Component Analysis(PCA)¶

PCA is widely used to reduce the dimensionality of data. It finds the directions (linear combinations of the original variables) along which the data exhibits the most variance, and reduces the dimensionality by projecting the data onto the subspace spanned by the leading directions.
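One way to see what PCA computes is to diagonalize the covariance matrix of the centered data by hand and compare with scikit-learn. The sketch below does this on made-up two-dimensional data; the variable names and the seed are assumptions of the example.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.RandomState(1)
X_demo = np.dot(rng.random_sample(size=(2, 2)), rng.normal(size=(2, 200))).T

# Center the data, then diagonalize its covariance matrix
X_centered = X_demo - X_demo.mean(axis=0)
cov = np.cov(X_centered, rowvar=False)  # normalizes by n_samples - 1
eigenvalues, eigenvectors = np.linalg.eigh(cov)

# eigh returns eigenvalues in ascending order; PCA sorts by decreasing variance
order = np.argsort(eigenvalues)[::-1]
eigenvalues = eigenvalues[order]
eigenvectors = eigenvectors[:, order]

pca = PCA(n_components=2).fit(X_demo)
print(eigenvalues)
print(pca.explained_variance_)
```

The eigenvalues match explained_variance_, and the eigenvectors (as rows) match components_ up to an overall sign, which is arbitrary for each direction.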

mpl.rcParams['figure.figsize']=[12,5]

np.random.seed(1)

X = np.dot(np.random.random(size=(2, 2)), np.random.normal(size=(2, 200))).T

plt.plot(X[:, 0], X[:, 1], 'og')

plt.axis('equal')

(-3.0, 3.0, -1.0, 1.0)

from sklearn.decomposition import PCA

pca = PCA(n_components=2)

pca.fit(X)

print(pca.explained_variance_)

print(pca.components_)

[ 0.75871884 0.01838551] [[ 0.94446029 0.32862557] [ 0.32862557 -0.94446029]]

mpl.rcParams['figure.figsize']=[12,5]

plt.plot(X[:, 0], X[:, 1], 'og', alpha=0.3)

plt.axis('equal')

for length, vector in zip(pca.explained_variance_, pca.components_):
    v = vector * 3 * np.sqrt(length)
    plt.plot([0, v[0]], [0, v[1]], '-k', lw=3)

clf = PCA(0.95) # if we only keep 95% of the variance

X_trans = clf.fit_transform(X)

print(X.shape)

print(X_trans.shape)

(200, 2) (200, 1)

X_new = clf.inverse_transform(X_trans)

plt.plot(X[:, 0], X[:, 1], 'og', alpha=0.2)

plt.plot(X_new[:, 0], X_new[:, 1], 'og', alpha=0.8)

plt.axis('equal');

X, y = iris.data, iris.target

from sklearn.decomposition import PCA

pca = PCA(n_components=2)

pca.fit(X)

X_reduced = pca.transform(X)

print ("Reduced dataset shape:", X_reduced.shape)

import pylab as pl

mpl.rcParams['figure.figsize']=[12,3]

pl.scatter(X_reduced[:, 0], X_reduced[:, 1], c=y)

print ("Meaning of the 2 components:")

for component in pca.components_:
    print(" + ".join("%.3f x %s" % (value, name)
                     for value, name in zip(component,
                                            iris.feature_names)))

Reduced dataset shape: (150, 2)

Meaning of the 2 components:

0.362 x sepal length (cm) + -0.082 x sepal width (cm) + 0.857 x petal length (cm) + 0.359 x petal width (cm)

-0.657 x sepal length (cm) + -0.730 x sepal width (cm) + 0.176 x petal length (cm) + 0.075 x petal width (cm)

X, y = iris.data, iris.target

from sklearn.cluster import KMeans

k_means = KMeans(n_clusters=5, random_state=0) # Fixing the RNG in kmeans

k_means.fit(X)

y_pred = k_means.predict(X)

pl.scatter(X_reduced[:, 0], X_reduced[:, 1], c=y_pred);

Neural Networks¶

Neural networks, or artificial neural networks (ANNs) as they are commonly known, are used in both machine learning and pattern recognition.

For example, a neural network for handwriting recognition is defined by a set of input neurons which may be activated by the pixels of an input image. After being weighted and transformed by a function (determined by the network's designer), the activations of these neurons are then passed on to other neurons. This process is repeated until finally, an output neuron is activated. This determines which character was read.

A typical ANN is organized in three layers: an input layer, a hidden layer, and an output layer. The hidden layer's job is to transform the inputs into something that the output layer can use. The output of a neuron is a function of the weighted sum of its inputs plus a bias.
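The "weighted sum plus bias" rule can be made concrete with a forward pass through a tiny three-layer network. Everything here is hypothetical: the layer sizes, the random untrained weights, and the choice of a sigmoid as the activation function.

```python
import numpy as np

def sigmoid(z):
    """Squash a weighted sum into the interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.RandomState(0)
n_inputs, n_hidden, n_outputs = 4, 3, 2

# Hypothetical, untrained parameters: random weights and zero biases
W_hidden = rng.normal(size=(n_inputs, n_hidden))
b_hidden = np.zeros(n_hidden)
W_output = rng.normal(size=(n_hidden, n_outputs))
b_output = np.zeros(n_outputs)

x = np.array([5.1, 3.5, 1.4, 0.2])  # one input sample with four features

# Each layer applies the activation to (weighted sum of its inputs + bias)
hidden = sigmoid(np.dot(x, W_hidden) + b_hidden)
output = sigmoid(np.dot(hidden, W_output) + b_output)
print(hidden.shape, output.shape)
```

Training a network means adjusting the weights and biases so that the outputs match known targets; this sketch only shows how activations flow from one layer to the next.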

A restricted Boltzmann machine (RBM) is a generative stochastic artificial neural network that can learn a probability distribution over its set of inputs. It is an example of an unsupervised neural network model supported by scikit-learn (as sklearn.neural_network.BernoulliRBM).
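A minimal sketch of fitting an RBM with scikit-learn's BernoulliRBM, assuming random binary inputs invented for the example. The hyperparameter values are arbitrary and chosen only to keep the run short.

```python
import numpy as np
from sklearn.neural_network import BernoulliRBM

rng = np.random.RandomState(0)
# Hypothetical binary data: 100 samples with 16 on/off features
X_binary = (rng.random_sample(size=(100, 16)) > 0.5).astype(float)

rbm = BernoulliRBM(n_components=4, learning_rate=0.05, n_iter=10,
                   random_state=0)
rbm.fit(X_binary)  # unsupervised: no labels are passed

# transform returns the hidden-unit activation probabilities for each sample
hidden = rbm.transform(X_binary)
print(hidden.shape)
print(rbm.components_.shape)
```

The hidden activations can serve as learned features for a downstream supervised model.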

Color-Space Segmentation Using K-Means Clustering¶

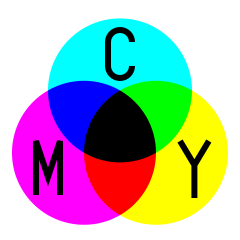

Color is an extremely sophisticated notion. It unifies the physical wavelength of light, the biological expression of cell distribution and pigment receptors in the eye, the neurological interpretation of the resulting optic signal, and the psychological factors of culture and perception[ref]. Unsurprisingly, there are a lot of different ways to represent colors. Color spaces represent different colors according to essentially different orthogonal bases. For instance, you’re probably familiar with $RGB$ v. $CMYK$ color spaces.


But there are many others, such as $Lab$ and $XYZ$. These color spaces do not necessarily cover the same range of perceptible colors (or imperceptible ones!), but transformations between spaces can still be defined. We will use this below to convert between $RGB$ and $Lab$.

$Lab$ was designed to approximate human vision. It exploits the fact that, in a sense, the human eye perceives four fundamental colors arranged along two opponent axes: a red-green axis $a$ and a blue-yellow axis $b$. Adding luminosity $L$ to these chromaticity axes yields a three-parameter color space that can express more colors than $RGB$ triplets can represent.

import numpy as np

import scipy as sp

import matplotlib as mpl

import matplotlib.pyplot as plt

import matplotlib.cm as cm

from sklearn.feature_extraction import image

from sklearn.cluster import spectral_clustering

from sklearn.cluster import KMeans

%matplotlib inline

from colormath.color_objects import LabColor, sRGBColor

from colormath.color_conversions import convert_color

rgbize = np.vectorize(sRGBColor)

convertize = np.vectorize(convert_color)

# Inspired by an example at http://www.mathworks.com/help/images/examples/color-based-segmentation-using-k-means-clustering.html

# Read image and convert it from RGB to Lab representation.

from pylab import imread, imshow, gray, mean

sat_rgb = imread('satellite.png')

plt.figure()

plt.xticks([])

plt.yticks([])

imshow(sat_rgb)

sat_rgb_cs = rgbize(sat_rgb[:,:,0],sat_rgb[:,:,1],sat_rgb[:,:,2])

sat_lab_cs = convertize(sat_rgb_cs, LabColor)

sat_lab = np.ones((sat_lab_cs.shape[0], sat_lab_cs.shape[1], 4))

for i in range(sat_lab_cs.shape[0]):
    for j in range(sat_lab_cs.shape[1]):
        sat_lab[i,j,0] = sat_lab_cs[i,j].lab_l/200+100 #rgb_r
        sat_lab[i,j,1] = sat_lab_cs[i,j].lab_a/200+100 #rgb_g
        sat_lab[i,j,2] = sat_lab_cs[i,j].lab_b/200+100 #rgb_b

mpl.rcParams['figure.figsize']=(15,3)

# Full-color image

plt.figure()

plt.subplot(1,4,1)

plt.xticks([])

plt.yticks([])

plt.xlabel(r'$RGB$ Full-Color Image')

imshow(sat_rgb)

# Red channel

cdict = {'red': ((0.0, 0.0, 0.0),

(1.0, 1.0, 1.0)),

'green': ((0.0, 0.0, 0.0),

(1.0, 0.0, 0.0)),

'blue': ((0.0, 0.0, 0.0),

(1.0, 0.0, 0.0))}

BkRd = mpl.colors.LinearSegmentedColormap('BkRd',cdict,256)

plt.subplot(1,4,2)

plt.xticks([])

plt.yticks([])

plt.xlabel(r'$R$')

imshow(sat_rgb[:,:,0], cmap = BkRd)

# Green channel

cdict = {'red': ((0.0, 0.0, 0.0),

(1.0, 0.0, 0.0)),

'green': ((0.0, 0.0, 0.0),

(1.0, 1.0, 1.0)),

'blue': ((0.0, 0.0, 0.0),

(1.0, 0.0, 0.0))}

BkGn = mpl.colors.LinearSegmentedColormap('BkGn',cdict,256)

plt.subplot(1,4,3)

plt.xticks([])

plt.yticks([])

plt.xlabel(r'$G$')

imshow(sat_rgb[:,:,1], cmap = BkGn)

# Blue Channel

cdict = {'red': ((0.0, 0.0, 0.0),

(1.0, 0.0, 0.0)),

'green': ((0.0, 0.0, 0.0),

(1.0, 0.0, 0.0)),

'blue': ((0.0, 0.0, 0.0),

(1.0, 1.0, 1.0))}

BkBl = mpl.colors.LinearSegmentedColormap('BkBl',cdict,256)

plt.subplot(1,4,4)

plt.xticks([])

plt.yticks([])

plt.xlabel(r'$B$')

imshow(sat_rgb[:,:,2], cmap = BkBl)

# Composite Lab image as RGB

plt.figure()

plt.subplot(1,4,1)

plt.xticks([])

plt.yticks([])

plt.xlabel(r'Composite $Lab$ as $RGB$')

imshow(sat_lab, origin='lower')

# L - luminosity

plt.subplot(1,4,2)

plt.xticks([])

plt.yticks([])

plt.xlabel(r'$L$')

imshow(sat_lab[:,:,0], cmap = cm.Greys_r)

# a - red-green axis

cdict = {'red': ((0.0, 0.0, 0.0),

(1.0, 1.0, 1.0)),

'green': ((0.0, 1.0, 1.0),

(1.0, 0.0, 0.0)),

'blue': ((0.0, 0.0, 0.0),

(1.0, 0.0, 0.0))}

RdGr = mpl.colors.LinearSegmentedColormap('RdGr',cdict,256)

plt.subplot(1,4,3)

plt.xticks([])

plt.yticks([])

plt.xlabel(r'$a$')

imshow(sat_lab[:,:,1], cmap = RdGr)

# b - blue-yellow axis

cdict = {'red': ((0.0, 0.0, 0.0),

(1.0, 1.0, 1.0)),

'green': ((0.0, 0.0, 0.0),

(1.0, 1.0, 1.0)),

'blue': ((0.0, 1.0, 1.0),

(1.0, 0.0, 0.0))}

BlYw = mpl.colors.LinearSegmentedColormap('BlYw',cdict,256)

plt.subplot(1,4,4)

plt.xticks([])

plt.yticks([])

plt.xlabel(r'$b$')

imshow(sat_lab[:,:,2], cmap = BlYw)

<matplotlib.image.AxesImage at 0x11272a050>

# Classify each color into clusters using the K-means algorithm.

from sklearn.cluster import KMeans

ab = sat_lab[:,:,1:3];

ab = np.reshape(ab,(sat_lab.shape[0]*sat_lab.shape[1],2));

# How many major colors do you perceive?

n_colors = 3

# Cluster, repeating 10x to avoid local minima.

kmeans = KMeans(n_clusters=n_colors, n_init=10)

cluster_index = kmeans.fit_predict(ab)

# Classify pixels by K-means cluster.

pixel_labels = np.reshape(cluster_index,(sat_lab.shape[0],sat_lab.shape[1]))

mpl.rcParams['figure.figsize']=(5,5)

plt.figure()

plt.xticks([])

plt.yticks([])

imshow(pixel_labels, cmap = cm.Blues_r)

<matplotlib.image.AxesImage at 0x11225ca90>

# Segment the original image by color cluster.

mpl.rcParams['figure.figsize']=(16,8)

sat_seg = np.zeros((n_colors,sat_rgb.shape[0],sat_rgb.shape[1],sat_rgb.shape[2]))

for k in range(n_colors):
    color_index = np.where(pixel_labels == k)
    sat_seg[k,color_index[0],color_index[1]] = sat_rgb[np.where(pixel_labels == k)]
    # subplot needs an integer column count; 2.0 forces true division
    plt.subplot(2, int(np.ceil(n_colors / 2.0)), k+1)
    plt.xticks([])
    plt.yticks([])
    imshow(sat_seg[k])

plt.tight_layout()

Image compression using clustering¶

In the previous example, K-means was used to identify polar caps. A related use of K-means is image compression. The size of an image usually relates to the number of colors it contains (as we saw earlier). This example compresses an image by reducing the number of distinct values used to represent it (5 clusters in this case). The purpose of K-means here is to find the 5 cluster centers that best represent the original values, so that the image remains recognizable.
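As a small variation on this idea, the sketch below quantizes a synthetic image by clustering its pixels as RGB triplets, so that every pixel is replaced by one of 5 representative colors. The random image and the variable names are made up for the example; the code that follows in this lesson instead clusters the individual channel intensities of a real photograph.

```python
import numpy as np
from sklearn.cluster import KMeans

rng = np.random.RandomState(0)
# Hypothetical 20x30 RGB image with channel values in [0, 1]
image = rng.random_sample(size=(20, 30, 3))

# One row per pixel, one column per color channel
pixels = image.reshape(-1, 3)
kmeans = KMeans(n_clusters=5, n_init=10, random_state=0).fit(pixels)

# Replace every pixel by the center of the cluster it was assigned to
quantized = kmeans.cluster_centers_[kmeans.labels_].reshape(image.shape)
n_distinct = len(np.unique(quantized.reshape(-1, 3), axis=0))
print(quantized.shape, n_distinct)
```

Storing one small label per pixel plus the 5 cluster centers takes less space than storing three full channel values per pixel, which is where the compression comes from.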

from sklearn.datasets import load_sample_image

c1= load_sample_image("china.jpg")

plt.imshow(c1);

import scipy.misc as misc

from sklearn.datasets import load_sample_image

c = load_sample_image("china.jpg")

#plt.imshow(c)

c = c.astype(np.float32)

#plt.imshow(c)

X = c.reshape((-1, 1)) # Reshaping it into an array

k_means = KMeans(n_clusters=5)

k_means.fit(X)

KMeans(copy_x=True, init='k-means++', max_iter=300, n_clusters=5, n_init=10,

n_jobs=1, precompute_distances=True, random_state=None, tol=0.0001,

verbose=0)

values = k_means.cluster_centers_.squeeze()

labels = k_means.labels_

c_compressed = np.choose(labels, values)

c_compressed.shape = c.shape

mpl.rcParams['figure.figsize']=[12,5.5]

fig, (ax0, ax1) = plt.subplots(ncols=2, sharex=True)

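# imshow expects floats in [0, 1] or integers in [0, 255], so convert back to uint8

ax0.imshow(c.astype(np.uint8))

ax1.imshow(c_compressed.astype(np.uint8))

plt.show()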

Credits¶

Lakshmi Rao, Abhishek Sharma, and Neal Davis developed these materials for Computational Science and Engineering at the University of Illinois at Urbana–Champaign.

This content is available under a [Creative Commons Attribution 3.0 Unported License](https://creativecommons.org/licenses/by/3.0/).
